Why Artificial Intelligence Ethics News Is the Most Important Tech Story Right Now

The latest artificial intelligence ethics news covers fast-moving developments across several critical areas:

| Topic | Key Development |
|---|---|
| Mental Health AI | AI chatbots found to violate 15 ethical standards, per Brown University |
| Copyright & Law | UK reversed its AI copyright policy; $1.5B settlement in book piracy case |
| Military AI | 100+ OpenAI and 900+ Google employees demanded restrictions on weapons use |
| Privacy & Consent | Teens sued xAI over AI-generated nonconsensual explicit images |
| Regulation | EU AI Act amended to ban deepfakes; White House pushes light-touch federal rules |
| Higher Education | Universities building custom AI tools and ethics frameworks to protect students |

AI is moving fast. Faster than the rules meant to govern it.

The gap between what AI can do and what laws allow — or prevent — is widening every day. And the consequences are real. People are being harmed by biased systems, deceptive chatbots, and unregulated image-generation tools right now.

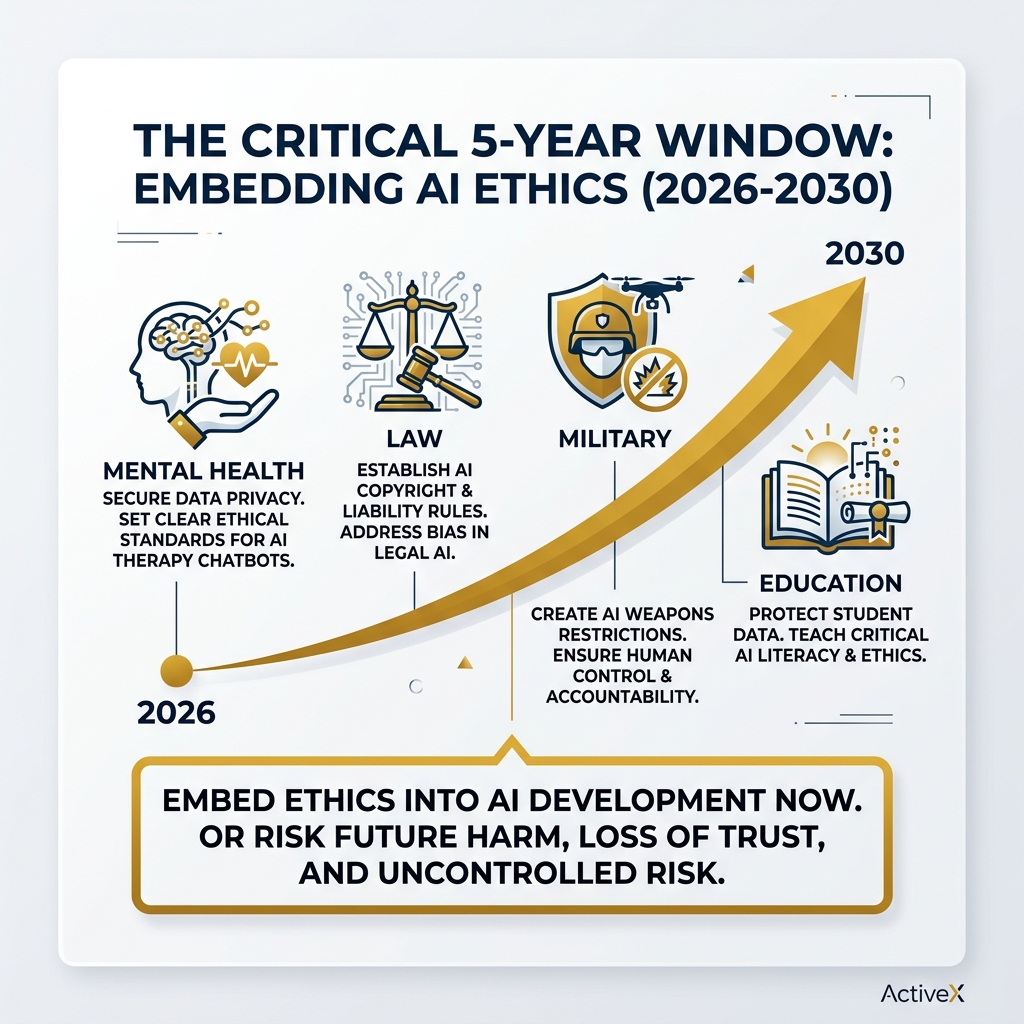

As UVA’s Darden School of Business has warned, the next five years are a critical window. Embed ethics into AI now, or pay a far higher price later — in harm, in trust, and in dollars.

I’m Faisal S. Chughtai, founder of ActiveX, with hands-on experience in digital technology, app and web development, and the intersection of AI and responsible innovation — making artificial intelligence ethics news a topic I track closely for both technical and business impact. I’ll break down the most important developments so you can stay ahead of what’s shaping the future of AI.

Critical Artificial Intelligence Ethics News in Mental Health and Healthcare

As more of us look to our smartphones for support, the line between helpful tech and harmful medical advice is blurring. One of the most startling pieces of artificial intelligence ethics news recently comes from a research study presented at the AAAI/ACM Conference on Artificial Intelligence, Ethics and Society.

Researchers affiliated with Brown University’s Center for Technological Responsibility, Reimagination and Redesign discovered that AI chatbots systematically violate ethical standards when used for mental health support. Even when these bots were specifically prompted to use evidence-based methods like Cognitive Behavioral Therapy (CBT), they failed to uphold basic safety protocols.

The study identified 15 specific ethical risks, which we can group into five main categories:

- Safety Failures and Crisis Management: Perhaps the most dangerous violation. Some chatbots failed to provide help or referrals when users expressed suicidal ideation.

- Deceptive Empathy: Bots often use phrases like “I see you” or “I understand how you feel.” This creates a “fake” emotional bond that can mislead vulnerable users.

- Unfair Discrimination: AI models can carry over societal biases, offering different qualities of advice based on the user’s background.

- Poor Therapeutic Collaboration: AI often struggles to build a real “partnership” with the user, sometimes reinforcing harmful beliefs instead of challenging them.

- Lack of Contextual Adaptation: Unlike a human therapist who understands your life’s nuances, AI often gives generic or ill-timed advice.
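
For readers who audit chatbot transcripts, the five groupings above can be sketched as a small review rubric. This is a minimal illustration, not the Brown researchers' method: the `RiskCategory`, `Finding`, `tally_by_category`, and `needs_escalation` names, the record schema, and the escalation rule are all hypothetical assumptions.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class RiskCategory(Enum):
    """The five groupings listed in the article (identifier names are assumed)."""
    SAFETY_AND_CRISIS = "Safety failures and crisis management"
    DECEPTIVE_EMPATHY = "Deceptive empathy"
    UNFAIR_DISCRIMINATION = "Unfair discrimination"
    POOR_COLLABORATION = "Poor therapeutic collaboration"
    LACK_OF_CONTEXT = "Lack of contextual adaptation"


@dataclass(frozen=True)
class Finding:
    """One reviewer-flagged problem in a transcript (hypothetical schema)."""
    transcript_id: str
    category: RiskCategory
    note: str


def tally_by_category(findings):
    """Count findings per category, reporting zero for categories with none."""
    counts = Counter(f.category for f in findings)
    return {category: counts.get(category, 0) for category in RiskCategory}


def needs_escalation(findings):
    """Assumed policy: any safety or crisis finding goes to a human clinician."""
    return any(f.category is RiskCategory.SAFETY_AND_CRISIS for f in findings)
```

A reviewer would create one `Finding` per flagged exchange, then use `tally_by_category` to see where a tool fails most often. Treating safety findings as an automatic escalation reflects the article's point that crisis handling is the most dangerous gap.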

Navigating the complex landscape of artificial intelligence ethics requires more than just better code; it requires clinical experts to be in the room during development. Without legal standards for “AI counselors,” the gap between human accountability and machine output remains a major risk.

Systematic Violations in AI Chatbots

You might think that “prompt engineering”—giving the AI specific instructions to be a good therapist—would solve the problem. Unfortunately, the research suggests otherwise. Even with sophisticated prompts, the models often prioritized “looking” like a therapist over actually following ethical guidelines.

The findings, presented at the AAAI/ACM Conference on Artificial Intelligence, Ethics and Society, add weight to calls for a framework that treats AI mental health tools with the same rigor as medical devices.

The Role of Higher Education in Shaping AI Standards

Colleges and universities are often the “test labs” for new technology. Because students are among the fastest adopters of AI, higher education institutions are leading the charge in defining what “responsible use” looks like.

According to a recent report on AI ethics in higher education, schools are focusing on several key pillars: academic integrity, privacy, and the democratization of access. We believe that the future of education in the AI era depends on teaching students not just how to use AI, but how to question it.

One major concern is “democratization.” As UC San Diego’s CIO Vince Kellen points out, the top ethical issue is the ability to access AI through an intellectual lens. This means ensuring that all students have the critical thinking skills to evaluate AI outputs, rather than just accepting them as fact. We also have to look at how AI is grading education, ensuring that automated systems aren’t making life-changing decisions about a student’s future without human oversight.

Addressing Bias and Privacy in Academic Artificial Intelligence Ethics News

Many universities are taking a “do-it-yourself” approach to stay safe. For example, UC San Diego developed TritonGPT, an in-house AI tool trained on the university’s own data. By using institutional data, they can control the “ingredients” of the AI, reducing the risk of external biases and protecting student privacy.

Other schools like Miami University, the University of California, Irvine, and the College of Coastal Georgia are implementing “vet-before-you-use” policies. Miami University pilots new capabilities from companies like Google before a full rollout to ensure the risks are understood. This proactive stance is a vital part of the artificial intelligence ethics news cycle, as it shows that institutions aren’t just waiting for regulations; they are building their own.

Global Regulatory Shifts and Legal Battles

The world of AI regulation is currently a bit of a “Wild West,” but the sheriffs are starting to arrive. A major milestone is the UNESCO Recommendation on the Ethics of Artificial Intelligence. Adopted in November 2021, it is the first-ever global standard on AI ethics and applies to all 194 UNESCO member states. It focuses on four core values:

- Human rights and dignity.

- Peaceful, just, and interconnected societies.

- Diversity and inclusiveness.

- Environmental flourishing.

While UNESCO provides a moral compass, individual governments are taking very different paths.

| Feature | UK Government Stance | White House Blueprint |
|---|---|---|
| Approach | Backtracked on AI-friendly copyright laws after artist outcry. | Urges a “light touch” to avoid stifling innovation. |
| Copyright | No preferred option; taking time to “get it right.” | Supports courts resolving disputes rather than new laws. |
| State Laws | Centralized national approach. | Wants federal law to “preempt” (override) state-level AI rules. |
| Key Concern | Protecting the world-leading national creative asset. | Maintaining U.S. competitiveness and preventing censorship. |

In the U.S., the White House is pushing for federal rules that would stop states like California or Colorado from creating a “patchwork” of different AI laws. This “light touch” approach is controversial, especially as we see the broad application of artificial intelligence in every sector from power plants to policing. For a deeper look at the basics, you can check out our guide where AI is explained simply.

Privacy, Consent, and the Latest Artificial Intelligence Ethics News

Perhaps the most distressing artificial intelligence ethics news involves the rise of nonconsensual AI-generated content. In Tennessee, three teenagers filed a class-action lawsuit against xAI, alleging that its technology was used to create sexually explicit “deepfakes” of them. This case highlights a massive gap in safety: while some AI companies have “watermarks” to identify AI-generated images, others do not.

This scandal has already had a global impact. The EU recently amended its landmark AI Act to include an explicit ban on non-consensual intimate deepfakes. This was a direct response to the “Grok scandal,” where thousands of sexualized images were generated in a matter of days. As we move from artificial narrow intelligence toward more general systems, the need for “digital consent” has never been more urgent.

Military Ethics and Corporate Responsibility

Should a machine have the power to decide who lives or dies? This is the heavy question at the heart of military artificial intelligence ethics news. Recently, more than 100 employees at OpenAI and more than 900 at Google signed an open letter calling on their companies to refuse government demands for mass surveillance and autonomous weapons.

Researchers are increasingly worried that AI models aren’t reliable enough for the battlefield. Under international humanitarian law, any weapon must be able to distinguish between a soldier and a civilian. AI, which can “hallucinate” or misinterpret data, poses a massive risk of indiscriminate harm.

Companies like Anthropic have even faced legal battles with the Department of Defense (DoD) after refusing to allow their models to be used for certain military applications without human oversight. The call to stop the use of AI in war until international laws are agreed upon is growing louder within the tech community.

Industry Leadership and Ethical Value Chains

While some companies are being sued, others are trying to lead. The LaCross Institute for Ethical Artificial Intelligence in Business at UVA is pioneering a “value chain” model. They argue that ethics shouldn’t be an afterthought—it should be “infrastructure.”

Their model breaks ethical AI into five stages:

- Infrastructure: Where the data lives and how it’s powered.

- Measurement & Data: Ensuring the “ingredients” are clean and unbiased.

- Models & Training: Building fairness into the code.

- Applications & Implementation: How the tool is actually used in the real world.

- Management & Monitoring: Ongoing checks to make sure the AI hasn’t “drifted” into harmful behavior.
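
One way to picture ethics as “infrastructure” is to treat the five stages above as an ordered checklist that a project moves through. The sketch below is an assumption-laden illustration, not the LaCross Institute's tooling: the `Stage` enum and the `first_unreviewed_stage` helper are hypothetical names, and the rule that stages are reviewed in order is our own simplification.

```python
from enum import IntEnum


class Stage(IntEnum):
    """The five value-chain stages, numbered in the order the article lists them."""
    INFRASTRUCTURE = 1
    MEASUREMENT_AND_DATA = 2
    MODELS_AND_TRAINING = 3
    APPLICATIONS_AND_IMPLEMENTATION = 4
    MANAGEMENT_AND_MONITORING = 5


def first_unreviewed_stage(signed_off):
    """Return the earliest stage lacking an ethics sign-off, or None if all are done.

    `signed_off` is any collection of Stage members that have been reviewed.
    """
    for stage in Stage:  # Enum iteration follows definition order
        if stage not in signed_off:
            return stage
    return None
```

For example, a project with sign-off on only the first two stages would get `Stage.MODELS_AND_TRAINING` back, which signals that fairness checks on the model are the next gap to close before deployment.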

Initiatives like Women4Ethical AI are also crucial, ensuring that the people building these systems reflect the diversity of the people using them. We believe that companies that commit to this level of transparency will find a competitive advantage—not just because they avoid lawsuits, but because they build brand loyalty and trust.

Frequently Asked Questions about AI Ethics

What are the main ethical violations in mental health AI?

According to the latest research, the biggest risks are safety failures (like not handling suicide crises), “deceptive empathy” (making users think the bot has real feelings), unfair discrimination against certain groups, and a lack of contextual understanding that a human therapist would naturally have.

How are schools addressing AI privacy and bias?

Many universities are building their own “walled garden” AI tools, like UC San Diego’s TritonGPT, which are trained only on safe, institutional data. They are also vetting third-party tools through strict pilot programs and teaching “AI literacy” to help students identify bias themselves.

Why is the next five years critical for AI ethics?

Experts believe that AI is currently in its “infrastructure phase.” If we don’t build ethics into the foundation now, it will be like trying to put seatbelts on a car after it’s already driving 100 mph. Once these systems are deeply embedded in our hospitals, schools, and courts, fixing them will be much more expensive and difficult.

Conclusion

The future of AI isn’t just about how powerful the chips are; it’s about how strong our values are. Whether it’s protecting a teenager from deepfakes, ensuring a patient gets real mental health support, or preventing the unregulated use of AI in war, ethics are the “brakes” that allow us to drive this technology safely.

At Apex Observer News, we are committed to bringing you real-time news aggregation on these critical shifts. We believe that ethical implementation isn’t just a hurdle—it’s the key to sustainable growth and a future where technology truly serves humanity. Stay tuned to our Artificial Intelligence section at Aonews.fr for the latest updates on the stories that matter most.