Why AI Deep Learning Is Changing Everything You Know About Artificial Intelligence

AI deep learning is a powerful branch of artificial intelligence that teaches computers to learn from data using networks of layered algorithms — much like the human brain processes information.

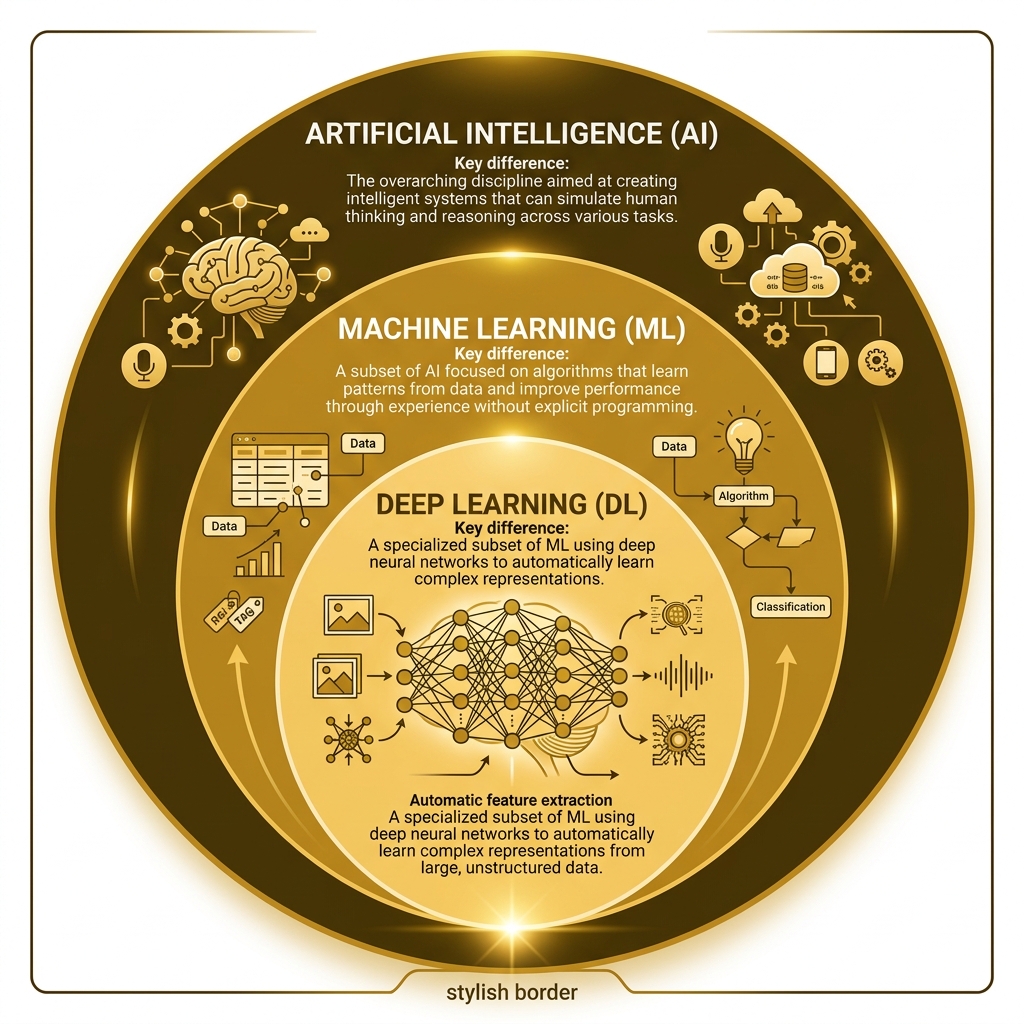

Here is a quick breakdown of how AI, machine learning, and deep learning relate to each other:

| Technology | What It Does | Human Input Needed |
|---|---|---|
| Artificial Intelligence (AI) | Broad field of machines simulating human thinking | High |
| Machine Learning (ML) | Algorithms that learn patterns from labeled data | Medium |
| Deep Learning (DL) | Neural networks that learn features automatically from raw data | Low |

In simple terms:

- AI is the big umbrella

- Machine learning sits inside AI

- Deep learning sits inside machine learning

Most of the AI you interact with daily — voice assistants, recommendation engines, image filters — runs on deep learning under the hood.

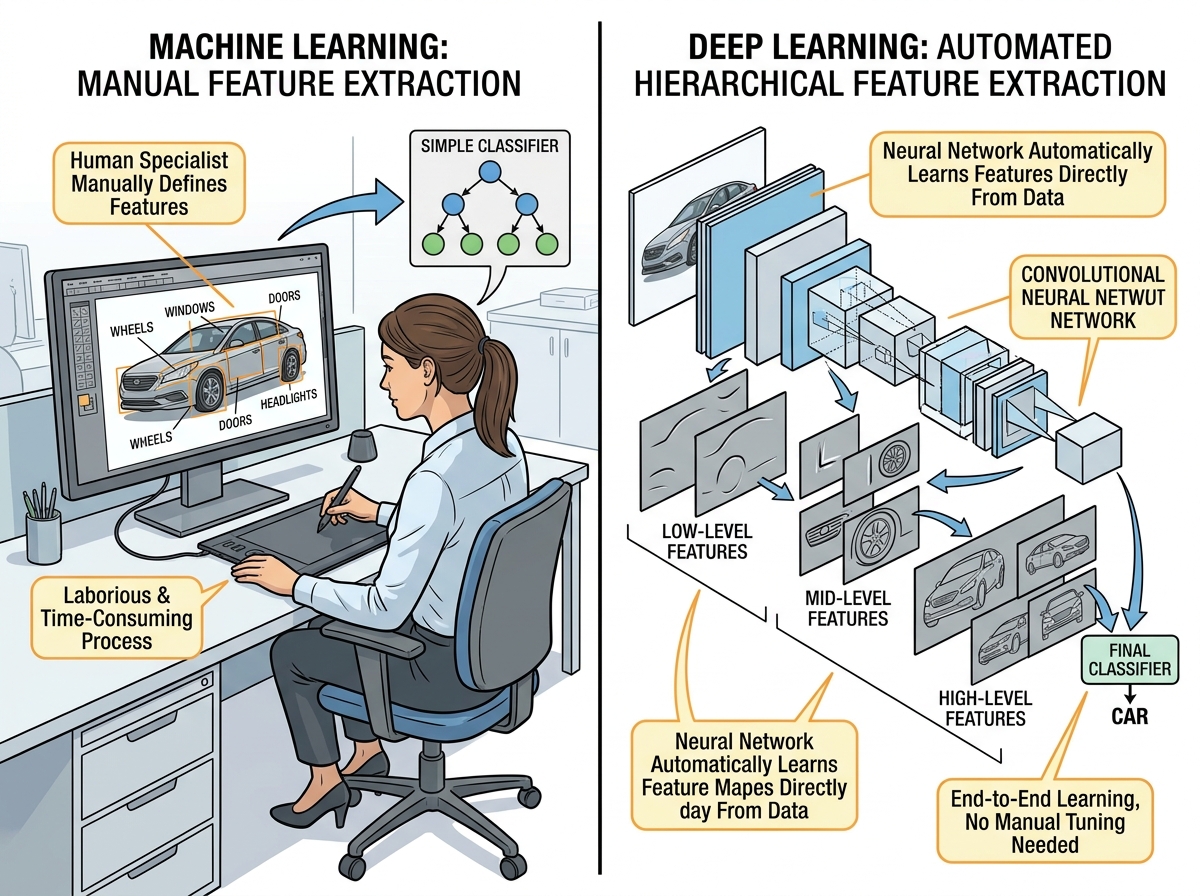

The leap from traditional machine learning to deep learning is significant. Where older systems needed humans to hand-craft features from data, deep learning models figure those features out on their own. That shift unlocked breakthroughs like self-driving cars, real-time translation, and tools that can generate images or text from a simple prompt.

Over 7 million people are already learning how to build and use these systems — and the gap between those who understand deep learning and those who don’t is only getting wider.

I’m Faisal S. Chughtai, founder of ActiveX, with hands-on experience in AI application development, digital strategy, and the technologies driving AI deep learning forward. In this guide, I’ll break down exactly how deep learning works, how it differs from machine learning, and why it matters to you right now.


Defining the Hierarchy: AI, Machine Learning, and AI Deep Learning

To truly understand AI deep learning, we first need to look at the family tree. We often hear these terms used interchangeably, but they represent different levels of specialized technology. Think of it like a Russian nesting doll: AI is the largest doll, Machine Learning is the one inside it, and Deep Learning is the smallest, most specialized doll at the center.

Artificial Intelligence (AI) is the broadest category. It refers to any technique that enables computers to mimic human behavior. This includes “Traditional AI,” which often relied on complex “if-then” logic and hard-coded rules. If you’ve ever played a video game where the computer opponent follows a set path, you’ve interacted with traditional AI.

Machine Learning (ML) is a subset of AI that moved away from rigid rules. Instead of being told exactly what to do, ML models are trained on data. They use statistical methods to improve at a task over time. However, traditional ML still requires a lot of “feature engineering.” For example, if we wanted an ML model to recognize a car, a human would have to manually tell the computer to look for four wheels, a windshield, and headlights.

Deep learning takes this a step further. It uses multi-layered neural networks to automate feature extraction. In our car example, a deep learning model doesn’t need a human to define “wheels.” We just feed it millions of images of cars, and the model figures out the patterns itself. It learns that certain shapes (circles) and textures (rubber) consistently appear in “car” photos.

The “deep” in deep learning refers to the number of layers in the neural network. While a standard neural network might only have two or three layers, deep learning models often have hundreds. This depth allows them to handle massive amounts of unstructured, unlabeled data—the kind of data that makes up most of our digital world, like raw text, audio files, and video streams.

| Characteristic | Traditional AI | Machine Learning | Deep Learning |
|---|---|---|---|
| Data Requirement | Low | Medium (Labeled) | Very High (Unlabeled/Raw) |
| Feature Extraction | Hard-coded by humans | Manual engineering | Automatic |
| Hardware | Standard CPU | Standard CPU/GPU | High-performance GPU/TPU |
| Complexity | Simple rules | Statistical patterns | Neural networks |

How Deep Neural Networks Function

At its core, AI deep learning is inspired by the human brain’s neural circuits. While we aren’t literally building a brain out of silicon, we use a structure that mimics how biological neurons pass electrical signals.

A deep neural network is built from three main types of layers:

- The Input Layer: This is where the raw data enters the system. If you’re training a model to recognize handwriting, the input layer receives the pixel values of the image.

- The Hidden Layers: This is where the “magic” happens. A deep learning model is distinguished by having multiple hidden layers. In these layers, the data is transformed. Early layers might detect simple edges, while deeper layers recognize complex shapes like eyes or hooves. Some older architectures, like the restricted Boltzmann machine (RBM), paved the way for these modern multi-layered stacks.

- The Output Layer: This is the final stop. It provides the prediction or classification. For a classification model, the output might use a softmax function to give a probability score (e.g., “98% chance this is a cat”).
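
To make the output layer’s softmax step concrete, here is a minimal sketch in plain Python. The class labels and raw scores are made up for illustration; a real model would produce the scores from its final layer.

```python
import math

def softmax(scores):
    """Turn raw output-layer scores into probabilities that sum to 1."""
    # Subtracting the largest score keeps exp() from overflowing
    # and does not change the resulting probabilities.
    largest = max(scores)
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical raw scores for three classes (assumed values).
labels = ["cat", "dog", "horse"]
probs = softmax([4.0, 0.5, -1.0])

for label, p in zip(labels, probs):
    print(f"{label}: {p:.1%}")
```

The largest raw score ends up with the largest probability, and the probabilities always add up to one, which is what lets us read the output as a confidence score.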

Inside these layers are individual units called neurons (or nodes). Each neuron holds one weight per incoming connection plus a single bias. Think of a weight as the “importance” the neuron assigns to a specific piece of information. As data passes through, each neuron computes a weighted sum of its inputs, adds the bias, and passes the result through an activation function. This function decides how strongly the signal is “fired,” or passed on, to the next layer.
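
A single neuron can be sketched in a few lines. This example assumes a sigmoid activation and hand-picked weights; both are illustrative choices, not the only options.

```python
import math

def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(inputs, weights, bias):
    """Weighted sum of the inputs plus the bias, passed through an activation."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(z)

# Assumed example values: three inputs, one weight per input, one bias.
output = neuron([0.5, 0.8, 0.1], [0.9, -0.4, 0.3], bias=0.1)
print(round(output, 3))
```

A large positive weight makes its input push the output toward 1, a negative weight pushes it toward 0, and the bias shifts the point at which the neuron starts to respond.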

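Research has shown that “Multilayer feedforward networks with a nonpolynomial activation function can approximate any function” (PDF). In plain terms, a network with enough hidden units can approximate any continuous function as closely as you like. The result says that such a network exists; it does not promise that training will find it, which is why data, architecture, and optimization still matter.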

The Mechanics of AI Deep Learning Training

How does a model actually “learn”? It doesn’t just wake up knowing how to translate French to English. It goes through a rigorous training process.

First, the model makes a guess (Forward Propagation). It takes an input, passes it through the layers, and produces an output. Initially, this guess will be terrible. We then use a Loss Function to calculate exactly how wrong the model was.
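
As a small illustration of what a loss function does, the sketch below uses mean squared error, one common choice among several. The target values and the two sets of guesses are invented for the example.

```python
def mean_squared_error(predictions, targets):
    """Average of the squared differences between guesses and correct answers."""
    if len(predictions) != len(targets):
        raise ValueError("predictions and targets must have the same length")
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)

# Assumed example values.
targets = [1.0, 0.0, 1.0]
early_guess = [0.4, 0.7, 0.2]   # an untrained model's output
later_guess = [0.9, 0.1, 0.8]   # the same model after some training

early_loss = mean_squared_error(early_guess, targets)
later_loss = mean_squared_error(later_guess, targets)
print(early_loss, later_loss)
```

The loss is a single number, so "learning" becomes a concrete goal: make that number smaller.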

To fix the error, the model uses Backpropagation. This involves working backward from the output to the input. Using the chain rule of calculus, the system calculates the “gradient”—a map showing which weights and biases contributed most to the error.
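
Here is a rough sketch of the chain rule at work on the smallest possible network: one sigmoid neuron with a squared-error loss. The function names, the input values, and the finite-difference check are assumptions made for this example; real frameworks compute these gradients automatically.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(x, w, b):
    """Forward pass: returns the neuron's activation."""
    return sigmoid(w * x + b)

def loss(a, y):
    return (a - y) ** 2

def backward(x, w, b, y):
    """Chain rule: dL/dw = dL/da * da/dz * dz/dw (and likewise for b)."""
    a = forward(x, w, b)
    dL_da = 2.0 * (a - y)      # derivative of the squared error
    da_dz = a * (1.0 - a)      # derivative of the sigmoid
    dz_dw = x                  # z = w * x + b
    dz_db = 1.0
    return dL_da * da_dz * dz_dw, dL_da * da_dz * dz_db

def numerical_grad_w(x, w, b, y, eps=1e-6):
    """Finite-difference estimate, used only to sanity-check the chain rule."""
    up = loss(forward(x, w + eps, b), y)
    down = loss(forward(x, w - eps, b), y)
    return (up - down) / (2 * eps)

# Assumed example values.
x, w, b, y = 1.5, 0.8, -0.2, 1.0
grad_w, grad_b = backward(x, w, b, y)
print(grad_w, numerical_grad_w(x, w, b, y))
```

The two printed numbers should agree closely. In a deep network the same multiplication of local derivatives is simply repeated layer by layer, from the output back toward the input.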

Finally, we apply Gradient Descent. This is an optimization algorithm that nudges the weights and biases in the direction that reduces the loss. We repeat this millions of times until the model’s error is as small as possible. It’s an iterative process of trial, error, and mathematical correction.
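
The whole loop of guess, measure, and nudge can be sketched on a toy problem. This example assumes a one-weight linear model and data generated from y = 2x + 1; the learning rate and step count are arbitrary choices that happen to work for this data.

```python
def train(xs, ys, learning_rate=0.05, steps=2000):
    """Fit y = w * x + b with plain gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Forward pass: current guesses.
        preds = [w * x + b for x in xs]
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (p - y) * x for p, y, x in zip(preds, ys, xs)) / n
        grad_b = sum(2 * (p - y) for p, y in zip(preds, ys)) / n
        # Nudge each parameter in the direction that reduces the loss.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

# Toy data generated from y = 2x + 1 (assumed for illustration).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2 * x + 1 for x in xs]

w, b = train(xs, ys)
print(round(w, 4), round(b, 4))
```

The model starts with both parameters at zero and ends up very close to the true slope and intercept. Real training differs mainly in scale: millions of parameters, batches of data, and more elaborate optimizers built on the same idea.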

Core Architectures and Real-World Applications

Not all deep learning models are built the same way. Different problems require different “blueprints” or architectures.

- Convolutional Neural Networks (CNNs): These are the “eyes” of AI. They use a mathematical operation called convolution to scan images for patterns. By the early 2000s, CNNs were already processing an estimated 10% to 20% of all checks written in the U.S. Today they are a backbone of computer vision, used in everything from self-driving cars to medical imaging for cancer detection.

- Recurrent Neural Networks (RNNs): These are designed for sequential data, where the order matters. This makes them a natural fit for speech recognition and natural language processing. Features such as phone autocomplete and voice assistants like Siri or Alexa have long used RNN-style models to track the context of what you’re saying, although many newer systems have moved to transformers.

- Generative Adversarial Networks (GANs): These are used to create new data. A GAN consists of two networks, a “Generator” and a “Discriminator,” playing a zero-sum game. The generator tries to create fake data (like a human face), and the discriminator tries to spot the fake. Each forces the other to improve until the generated output becomes remarkably realistic.

- Transformers: Introduced in the seminal 2017 paper “Attention Is All You Need” (PDF), transformers changed everything. They use a “self-attention” mechanism to weigh the importance of different parts of the input data, regardless of how far apart they are. This allowed for much larger and more capable models.
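
To show what the convolution in a CNN actually computes, here is a minimal sketch with no padding and a stride of one. The tiny image and the vertical-edge kernel are hand-picked assumptions; in a real CNN the kernel values are learned during training. Deep learning libraries typically implement this sliding-window form, which is strictly speaking a cross-correlation because the kernel is not flipped.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image and sum the element-wise products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = 0
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        output.append(row)
    return output

# A 4x5 "image": dark on the left (0), bright on the right (1).
image = [
    [0, 0, 0, 1, 1],
    [0, 0, 0, 1, 1],
    [0, 0, 0, 1, 1],
    [0, 0, 0, 1, 1],
]

# A kernel that responds to dark-to-bright vertical edges.
vertical_edge = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

feature_map = convolve2d(image, vertical_edge)
print(feature_map)  # [[0, 3, 3], [0, 3, 3]]
```

The output is zero where the image is flat and positive where the kernel overlaps the edge, which is the sense in which a convolutional layer "scans for patterns."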

The real-world impact is massive. In healthcare, deep learning models analyze MRI scans to help spot tumors, with accuracy that in some reported studies rivals that of specialists. In 2011, the DanNet CNN achieved superhuman performance in a visual pattern recognition contest, outperforming traditional methods by a factor of three.

The Impact of AI Deep Learning on Generative AI

We are currently living in the era of Generative AI, and AI deep learning is the engine driving it. Generative AI doesn’t just recognize patterns; it uses those patterns to create something entirely new: images, code, music, or poetry.

This revolution is powered by Foundation Models. These are massive deep learning models trained on vast swaths of the internet. Because they are so large, they can be adapted to many different tasks. If you want to see how these models are built from the ground up, you can watch an introductory video to foundation models to see the scale involved.

The shift to Transformer-based architectures allowed models to understand long-range context. This is why a tool like ChatGPT can refer back to what you said at the beginning of a long conversation, as long as it still fits in the model’s context window. It’s also why synthetic media, such as AI-generated videos and voices, is becoming realistic enough that it can be hard to tell apart from the real thing.
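
The long-range behavior comes from self-attention, which can be sketched in plain Python. This is a deliberately stripped-down version: it assumes the queries, keys, and values are the token vectors themselves, whereas a real transformer first passes them through learned projection matrices and uses multiple attention heads. The two-dimensional token vectors are invented for the example.

```python
import math

def softmax(scores):
    largest = max(scores)
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(tokens):
    """Scaled dot-product self-attention with Q = K = V = tokens (a simplification)."""
    d = len(tokens[0])
    outputs, all_weights = [], []
    for query in tokens:
        # Score this token against every token in the sequence, near or far.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
            for key in tokens
        ]
        weights = softmax(scores)
        # The output is a weighted mix of all token vectors.
        mixed = [
            sum(wt * value[i] for wt, value in zip(weights, tokens))
            for i in range(d)
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Assumed toy sequence: the first and last tokens are identical.
tokens = [
    [1.0, 0.0],
    [0.0, 1.0],
    [1.0, 0.0],
]

outputs, weights = self_attention(tokens)
print([round(wt, 3) for wt in weights[0]])
```

The first token attends to the last token exactly as strongly as it attends to itself, even though they sit at opposite ends of the sequence. Position in the sequence plays no role in the score, only similarity does.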

Evolution, Hardware, and Future Challenges

The history of AI deep learning isn’t a straight line. While neural network concepts have existed for decades, we lacked the “fuel” to run them. The “Deep Learning Revolution” finally kicked off in the late 2000s thanks to two things: Big Data and GPUs.

Originally designed for video games, Graphics Processing Units (GPUs) turned out to be perfect for the heavy math required by neural networks. In 2009, a 100-million parameter network was trained on 30 Nvidia GeForce GPUs, achieving training speeds up to 70 times faster than traditional CPUs. This hardware breakthrough changed the game.

Today, the NVIDIA Deep Learning Institute and cloud providers make this power accessible to everyone. You don’t need a supercomputer in your basement; you can start by creating a free AWS account and renting GPU instances to train your own models. Other clouds offer comparable hardware, including Google Cloud’s TPUs (Tensor Processing Units).

Challenges and the “Black Box” Problem

Despite the success, deep learning faces significant hurdles:

- Data Hunger: These models need millions of high-quality samples. Poor data leads to “garbage in, garbage out.”

- Computational Cost: Training a state-of-the-art model can cost millions of dollars in electricity and hardware time.

- The Black Box: Deep learning models are notoriously hard to interpret. We know they work, but because they have billions of parameters, it’s often difficult to explain why they made a specific decision. This is a major concern in fields like law and medicine.

- Overfitting: This happens when a model learns the training data too well, including the noise and errors, making it fail when it sees new, real-world data.
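
Overfitting is easiest to see with a model that does nothing but memorize. The sketch below assumes a one-nearest-neighbor predictor and a handful of hand-picked noisy samples of the line y = x; both are illustrative stand-ins for a large network and a real dataset.

```python
def nearest_neighbor_predict(train_x, train_y, x):
    """Copy the answer of the closest training point: pure memorization."""
    best = min(range(len(train_x)), key=lambda i: abs(train_x[i] - x))
    return train_y[best]

def mean_squared_error(predictions, targets):
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)

# Assumed training data: y = x plus hand-picked noise.
train_x = [0.0, 1.0, 2.0, 3.0, 4.0]
train_y = [0.5, 0.4, 2.6, 2.5, 4.6]

# Held-out validation data that follows the true pattern y = x.
val_x = [0.4, 1.6, 2.4, 3.6]
val_y = [0.4, 1.6, 2.4, 3.6]

train_preds = [nearest_neighbor_predict(train_x, train_y, x) for x in train_x]
val_preds = [nearest_neighbor_predict(train_x, train_y, x) for x in val_x]

train_error = mean_squared_error(train_preds, train_y)
val_error = mean_squared_error(val_preds, val_y)
print(train_error, val_error)
```

The training error is zero because the model has memorized every sample, noise included, while the validation error is clearly larger. That gap between training and validation performance is the usual warning sign, which is why practitioners hold out data the model never sees during training.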

Learning Resources for AI Deep Learning

If you’re looking to join the 7 million people already diving into this field, there has never been a better time. We recommend starting with hands-on labs and structured paths.

- NVIDIA training: Offers professional certification and hands-on experience with production-ready environments.

- IBM courses: Excellent for learning fundamental concepts and building skills with guided projects.

- AWS Management Console: A practical place to build and deploy your first AI application using managed services like SageMaker.

Professional certification in these areas is becoming a gold standard for technical careers, as businesses across every industry scramble to implement AI deep learning solutions.

Frequently Asked Questions about Deep Learning

What is the main difference between ML and DL?

The biggest difference is how they handle features. In Machine Learning (ML), a human must manually define the features the computer should look for. In Deep Learning (DL), the neural network discovers those features automatically from the raw data. DL also requires much more data and more powerful hardware than traditional ML.

Why does deep learning require GPUs?

Neural networks involve millions of simultaneous mathematical calculations (mostly matrix multiplications). A standard CPU is like a very smart person who can do one hard task at a time. A GPU is like thousands of students who can each do a simple math problem at the exact same time. This “parallel processing” is what makes deep learning feasible.
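
The sketch below shows why this work parallelizes so well. It computes one layer’s weighted sums for two samples as a matrix multiplication in plain Python; the input and weight values are assumptions for illustration. The code itself runs sequentially, but notice that each output cell depends only on one row and one column, never on another output cell.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    rows, inner, cols = len(a), len(b), len(b[0])
    # Every output cell is an independent dot product, so a GPU can hand
    # each one to a different core and compute them all at the same time.
    return [
        [sum(a[i][k] * b[k][j] for k in range(inner)) for j in range(cols)]
        for i in range(rows)
    ]

# Two samples with two features each (assumed values).
inputs = [
    [1.0, 2.0],
    [3.0, 4.0],
]

# Weights connecting 2 inputs to 3 neurons (assumed values).
weights = [
    [0.5, -1.0, 0.0],
    [0.25, 0.5, 1.0],
]

layer_output = matmul(inputs, weights)
print(layer_output)  # [[1.0, 0.0, 2.0], [2.5, -1.0, 4.0]]
```

Here there are only six output cells. In a real model a single layer can produce millions, which is where thousands of simple GPU cores pay off.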

Is deep learning better than machine learning?

Not necessarily—it depends on the task. Deep learning is “better” for complex, unstructured data like images, speech, and natural language. However, if you have a smaller, structured dataset (like an Excel spreadsheet of house prices), traditional machine learning is often faster, cheaper, and easier to explain.

Conclusion

The future of AI deep learning is bright, and its impact is only beginning to be felt. From solving 50-year-old biological challenges like protein folding to predicting global weather patterns with unprecedented accuracy, deep learning is the “spark” in the new electricity of AI.

At Apex Observer News, we are committed to keeping you updated on the latest breakthroughs in this fast-moving landscape. Whether you are a curious observer or an aspiring engineer, the tools to build the future are now at your fingertips.

Ready to take the next step? Enroll now at DeepLearning.AI to start or advance your career in AI and become part of the revolution.
