How Computer Vision in AI Is Reshaping Machine Perception

Computer vision in AI is a branch of artificial intelligence that gives machines the ability to interpret and understand visual information — from images and videos — much like the human eye and brain work together to make sense of the world.

Here is a quick breakdown of what it means and why it matters:

| Concept | What It Means |
|---|---|
| What it is | A field of AI that processes and analyzes visual data using machine learning |
| How it works | Cameras capture images, algorithms extract patterns, models make decisions |
| Core tasks | Object detection, image classification, segmentation, tracking |
| Key tools | OpenCV, TensorFlow, PyTorch |
| Where it’s used | Healthcare, autonomous vehicles, manufacturing, security, retail |

Think about your phone unlocking when it recognizes your face, or a self-driving car spotting a pedestrian at a crosswalk. That is computer vision at work: fast, automated, and, on a growing number of narrow tasks, more accurate than human observers.

The numbers back up the momentum. The AI in Computer Vision market was valued at USD 12 billion in 2021 and is projected to reach USD 205 billion by 2030, growing at a staggering compound annual growth rate (CAGR) of 37.05%. On some benchmarks, machines already outperform humans: on the Labeled Faces in the Wild (LFW) face-verification benchmark, Google’s FaceNet reached 99.63% accuracy, compared with a commonly cited human baseline of 97.53%.
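As a quick arithmetic check, the growth rate implied by the two endpoints can be computed directly with the standard CAGR formula. This uses only the rounded figures quoted above (USD 12 billion in 2021, USD 205 billion in 2030), so a small mismatch in the last digit is expected.

```latex
\mathrm{CAGR} = \left(\frac{V_{2030}}{V_{2021}}\right)^{\frac{1}{2030-2021}} - 1
             = \left(\frac{205}{12}\right)^{1/9} - 1
             \approx 1.371 - 1 \approx 37.1\%
```

This agrees with the reported 37.05% to within the rounding of the endpoint values.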

This guide will walk you through everything — from the basics of how machines “see,” to the deep learning models powering it, to where it’s being used right now across industries.

I’m Faisal S. Chughtai, founder of ActiveX, with hands-on experience in AI-driven application development and deploying intelligent digital solutions — including systems that leverage computer vision in AI to power smarter, faster apps. I’ll make sure this guide stays practical, clear, and genuinely useful as we dig deeper.


What is Computer Vision in AI?

At its simplest, computer vision in AI is the science of teaching machines to “see.” While a camera can record a video, it doesn’t inherently understand what is happening in the frame. Computer vision provides the “brain” to that “eye,” allowing a computer to identify a cat, detect a defect in a circuit board, or navigate a crowded street.

We often compare this technology to human vision, but there is a distinct difference. Human vision is a biological process that uses the eyes and the visual cortex to interpret light. Computer vision, by contrast, relies on sensors, massive datasets, and complex mathematical algorithms. Humans are great at understanding context (we know a person standing behind a tree is still a whole person), while machines have historically struggled with these nuances. Still, with rapid progress in artificial narrow intelligence (see A beginner guide to artificial narrow intelligence and beyond), machines are closing that gap quickly.

The Difference Between Computer Vision and Image Processing

It is common to confuse these two terms, but they serve different purposes. Computer vision involves interpreting the content of an image to make a decision or provide an insight. Image processing, on the other hand, transforms the image itself, such as sharpening a blurry photo or adjusting the brightness. Think of image processing as the “filter” and computer vision as the “analyst.”
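The difference is easiest to see in the type of output each produces. The toy sketch below assumes an image is a small 2D list of 8-bit grayscale values; `adjust_brightness` is an image-processing step (image in, image out), while `is_daytime` is a hypothetical stand-in for a computer vision decision (image in, interpretation out). A real vision system would use a trained model rather than a mean-brightness threshold.

```python
# Toy illustration: an image is a 2D list of 8-bit grayscale values (0-255).
Image = list[list[int]]


def adjust_brightness(img: Image, delta: int) -> Image:
    """Image processing: image in, image out (values clamped to 0-255)."""
    return [[min(255, max(0, p + delta)) for p in row] for row in img]


def is_daytime(img: Image, threshold: float = 128.0) -> bool:
    """Hypothetical 'computer vision' decision: image in, interpretation out.

    A mean-brightness threshold stands in for a trained model here,
    purely to show that the output is a judgement, not another image.
    """
    pixels = [p for row in img for p in row]
    return sum(pixels) / len(pixels) >= threshold


dark = [[10, 20], [30, 40]]
brighter = adjust_brightness(dark, 200)  # still an image
print(brighter)
print(is_daytime(dark), is_daytime(brighter))
```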

Historical Evolution: From Cats to AlexNet

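The journey of computer vision in AI began in the 1950s and 1960s. In 1959, neurophysiologists David Hubel and Torsten Wiesel showed that individual neurons in a cat’s visual cortex respond to simple features such as oriented edges, an idea that later inspired layered models of machine vision. One of the most famous early AI milestones was the 1966 “Summer Vision Project” at MIT, where researchers naively thought they could solve a significant part of machine vision in a single summer by attaching a camera to a computer.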

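The field evolved through decades of hand-coded algorithms until 2012, when a deep convolutional neural network called AlexNet changed everything. Trained on GPUs using a subset of roughly 1.2 million images from the ImageNet dataset (which contains over 15 million labeled images in total), AlexNet won the 2012 ImageNet Large Scale Visual Recognition Challenge with a top-5 error rate of 15.3%, compared with 26.2% for the runner-up. That result convinced much of the field that deep learning was the future of visual AI.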

How Machines Interpret Visual Data

To a computer, an image isn’t a picture of a sunset; it is a massive grid of numbers. If you look at a digital image closely, it is made of pixels. Each pixel has a value representing its color and intensity.

- Numerical Arrays: A grayscale image is a 2D array of numbers, typically 0 to 255 for 8-bit images (0 is black, 255 is white).

- Color Channels: A color image is typically a 3D array with three layers: Red, Green, and Blue (RGB).

- Spatial Context: Algorithms look for relationships between these numbers to find edges, textures, and eventually, complex objects.

This progression mirrors David Marr’s computational theory of vision, which proposes that vision happens in stages: from a “primal sketch” of edges and blobs, through a “2½-D sketch” of surface depth and orientation, to a full 3D model of the scene.
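The array view of an image can be sketched with NumPy. The tiny images below are hypothetical, the RGB-to-grayscale weights are the ITU-R BT.601 luma coefficients (one common convention among several), and the horizontal difference at the end is only a crude version of the edge-finding first stage, not a production edge detector.

```python
import numpy as np

# Hypothetical 2x3 grayscale image: one 8-bit intensity per pixel.
gray = np.array([[0, 128, 255],
                 [64, 192, 32]], dtype=np.uint8)
print(gray.shape)  # (height, width)

# Hypothetical 2x3 color image: height x width x 3 channels (R, G, B).
rgb = np.zeros((2, 3, 3), dtype=np.uint8)
rgb[0, 0] = [255, 0, 0]      # top-left pixel is pure red
rgb[1, 2] = [255, 255, 255]  # bottom-right pixel is white
print(rgb.shape)

# Collapse the three channels to one luma value per pixel (BT.601 weights).
weights = np.array([0.299, 0.587, 0.114])
luma = (rgb.astype(np.float64) @ weights).round().astype(np.uint8)
print(luma)

# Differences between horizontal neighbours: large values mark candidate edges.
# Cast to a signed type first so uint8 subtraction cannot wrap around.
edges = np.abs(np.diff(gray.astype(np.int16), axis=1))
print(edges)
```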

Mathematical Prerequisites for Visual AI

If you want to understand how computer vision in AI actually functions under the hood, you have to get comfortable with math. It isn’t just about code; it’s about linear algebra and signal processing.

- Linear Algebra: Matrices and vectors are the language of images. Techniques like Singular Value Decomposition (SVD) and Principal Component Analysis (PCA) help reduce the complexity of visual data.

- Signal Processing: Concepts like Fourier Transforms and convolutions are used to filter noise and extract features.

- Eigenvalues and Eigenvectors: These underpin the classic Eigenfaces approach to facial recognition, where the eigenvectors of a face dataset’s covariance matrix capture the directions in which faces vary most.
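These three ideas meet in an Eigenfaces-style PCA, sketched below with NumPy’s `np.linalg.svd`. The “faces” are a hypothetical synthetic dataset built from two hidden 8x8 patterns, and the function names are illustrative rather than taken from any library. Because the data has only two underlying patterns, two principal components are enough to reconstruct each image.

```python
import numpy as np


def principal_components(images: np.ndarray, k: int):
    """PCA via SVD on images of shape (n_images, height, width).

    Returns the flattened mean image, the top-k principal directions
    ('eigenimages', each of length height * width), and all singular values.
    """
    n = images.shape[0]
    X = images.reshape(n, -1).astype(np.float64)  # one row per image
    mean = X.mean(axis=0)
    Xc = X - mean                                 # center the data
    # Rows of Vt are eigenvectors of the covariance matrix Xc.T @ Xc.
    _, s, Vt = np.linalg.svd(Xc, full_matrices=False)
    return mean, Vt[:k], s


def project(image: np.ndarray, mean: np.ndarray, comps: np.ndarray) -> np.ndarray:
    """Compress one image to k coefficients in the PCA basis."""
    return comps @ (image.reshape(-1) - mean)


def reconstruct(coeffs, mean, comps, shape) -> np.ndarray:
    """Rebuild an approximate image from its k coefficients."""
    return (mean + coeffs @ comps).reshape(shape)


# Hypothetical toy dataset: 20 images, each a mix of two hidden patterns.
rng = np.random.default_rng(0)
pattern_a, pattern_b = rng.normal(size=(2, 8, 8))
mix = rng.normal(size=(20, 2))
faces = mix[:, 0, None, None] * pattern_a + mix[:, 1, None, None] * pattern_b

mean, comps, s = principal_components(faces, k=2)
coeffs = project(faces[0], mean, comps)
approx = reconstruct(coeffs, mean, comps, faces[0].shape)
print(coeffs.shape, np.allclose(approx, faces[0]))
```

Keeping only the top few components is what “reducing the complexity of visual data” means in practice: each 64-pixel image is summarized by two numbers here.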

How Computer Vision Works: From Pixels to Insights

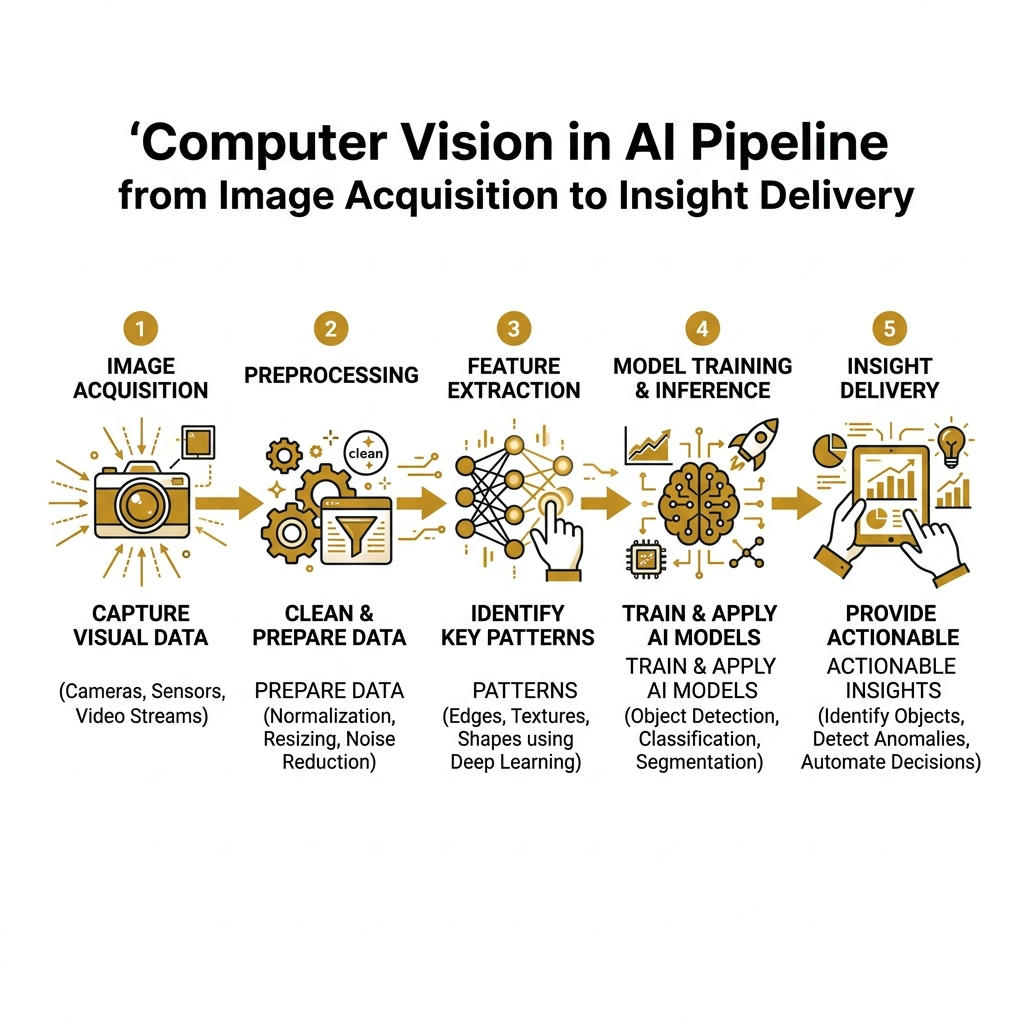

The process of turning raw pixels into actionable insights involves several critical steps. We can think of it as a pipeline:

- Image Acquisition: Capturing data via cameras, LiDAR, or scanners.

- Preprocessing: Cleaning the data. This includes noise reduction, resizing, and data augmentation (flipping or rotating images to give the AI more examples to learn from).

- Feature Extraction: The AI looks for specific “features” like corners, edges, or blobs.

- Model Inference: The processed data is fed into a neural network to make a prediction.
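The pipeline can be sketched end to end with NumPy. Everything here is a simplifying assumption: random pixels stand in for image acquisition, nearest-neighbour resizing stands in for a proper resampling routine, and `infer` is a hypothetical placeholder for a trained neural network (a real model would also learn its own features rather than rely on a single brightness value).

```python
import numpy as np


def preprocess(img: np.ndarray, size: int) -> np.ndarray:
    """Resize to size x size (nearest neighbour) and scale uint8 pixels to [0, 1]."""
    h, w = img.shape[:2]
    rows = np.arange(size) * h // size  # source row for each output row
    cols = np.arange(size) * w // size  # source column for each output column
    resized = img[rows[:, None], cols[None, :]]
    return resized.astype(np.float32) / 255.0


def augment(img: np.ndarray) -> list[np.ndarray]:
    """Data augmentation: original, horizontal flip, and a 90-degree rotation."""
    return [img, np.fliplr(img), np.rot90(img)]


def infer(img: np.ndarray) -> str:
    """Hypothetical stand-in for a neural network: labels by mean brightness."""
    return "bright" if img.mean() > 0.5 else "dark"


# Acquisition stand-in: a hypothetical dark 48x64 grayscale frame.
raw = np.random.default_rng(1).integers(0, 100, size=(48, 64), dtype=np.uint8)
x = preprocess(raw, size=32)
batch = augment(x)
print(x.shape, float(x.min()), float(x.max()))
print([infer(b) for b in batch])
```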

Comparing CNNs and Vision Transformers

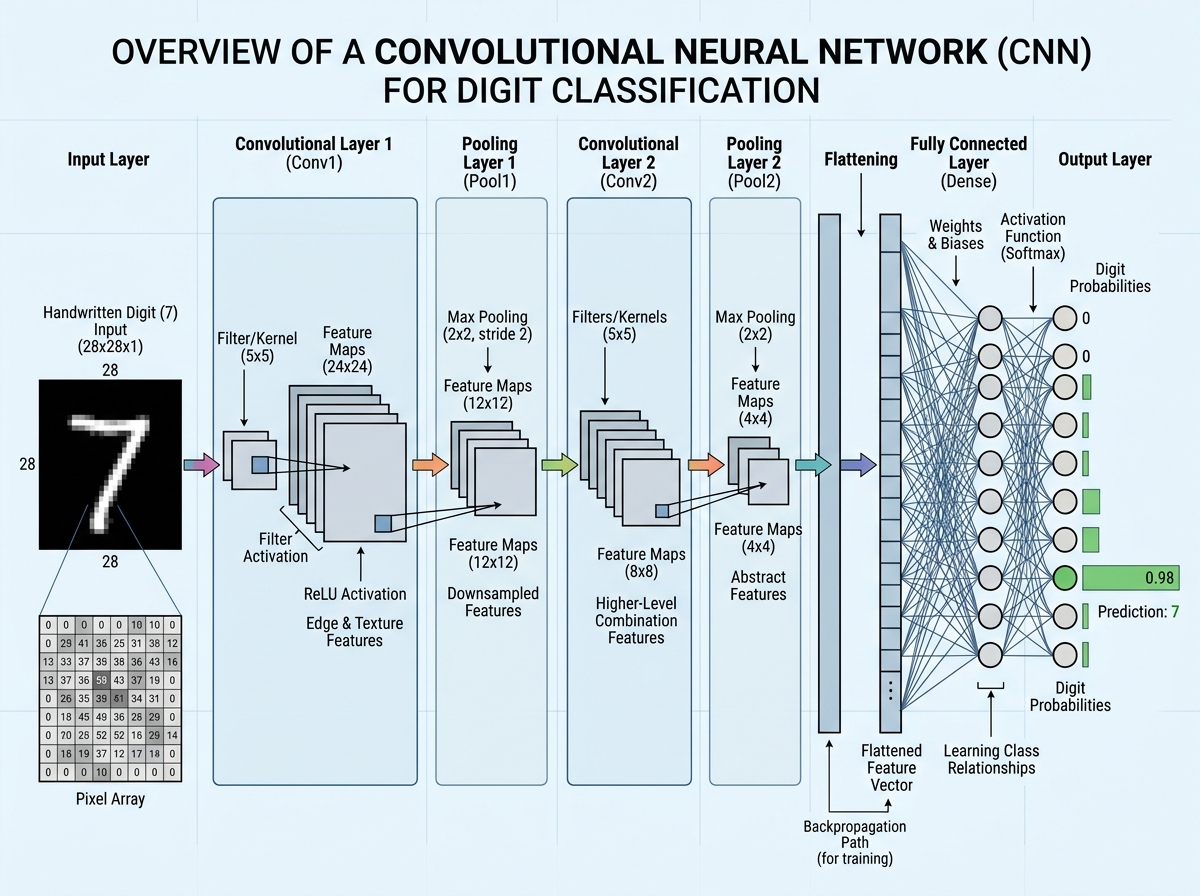

For years, Convolutional Neural Networks (CNNs) were the undisputed kings of computer vision in AI. They work by sliding “filters” over an image to find patterns. However, a new challenger has emerged: the Vision Transformer (ViT).

| Feature | Convolutional Neural Networks (CNN) | Vision Transformers (ViT) |
|---|---|---|
| Approach | Processes pixels in local neighborhoods (convolutions) | Breaks image into patches and uses “attention” |
| Data Requirement | Efficient with smaller datasets | Requires massive data to outperform CNNs |
| Global Context | Struggles with long-range relationships in an image | Excellent at seeing how distant parts of an image relate |
| Standard Use | Real-time object detection (YOLO) | High-accuracy classification at scale |
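The “sliding filter” idea behind CNNs can be sketched in a few lines of NumPy. This is a minimal, unoptimized version (stride 1, no padding) using a hand-written Sobel-like vertical-edge kernel; in a real CNN the kernel weights are learned during training. Like most deep learning libraries, it computes cross-correlation (the kernel is not flipped), which is what CNN layers conventionally call convolution.

```python
import numpy as np


def conv2d(image: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """Slide `kernel` over `image` in 'valid' mode with stride 1."""
    kh, kw = kernel.shape
    oh, ow = image.shape[0] - kh + 1, image.shape[1] - kw + 1
    out = np.zeros((oh, ow), dtype=np.float64)
    for i in range(oh):
        for j in range(ow):
            patch = image[i:i + kh, j:j + kw]   # local neighbourhood under the filter
            out[i, j] = np.sum(patch * kernel)  # weighted sum = filter response
    return out


# Hand-written vertical-edge filter; a CNN would learn weights like these.
sobel_x = np.array([[-1, 0, 1],
                    [-2, 0, 2],
                    [-1, 0, 1]], dtype=np.float64)

# Toy image: dark left half, bright right half, so one vertical edge.
img = np.zeros((5, 6))
img[:, 3:] = 1.0
response = conv2d(img, sobel_x)
print(response)  # strong responses only in the columns that straddle the edge
```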

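Research on Vision Transformers, starting with Google’s 2020 paper “An Image Is Worth 16x16 Words,” has shown that treating image patches like words in a sentence can match or beat strong CNNs on image classification, especially when the model is pre-trained on very large datasets with millions of images.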
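The patch-as-word idea can be sketched with NumPy: split an image into non-overlapping tiles, flatten each tile into a token, project it linearly, and let every token attend to every other one. This is a simplified, assumption-laden sketch with random (untrained) weights and a single attention head; a real ViT also adds a class token, position embeddings, multiple heads, and stacked transformer layers.

```python
import numpy as np


def patchify(image: np.ndarray, patch: int) -> np.ndarray:
    """Split an (H, W, C) image into patch x patch tiles, one flattened token each."""
    h, w, c = image.shape
    assert h % patch == 0 and w % patch == 0, "image size must be divisible by patch"
    tiles = image.reshape(h // patch, patch, w // patch, patch, c)
    tiles = tiles.transpose(0, 2, 1, 3, 4)        # (tile_row, tile_col, patch, patch, C)
    return tiles.reshape(-1, patch * patch * c)   # (num_patches, patch * patch * C)


def self_attention(x: np.ndarray, wq, wk, wv) -> np.ndarray:
    """Single-head scaled dot-product attention over all patch tokens."""
    q, k, v = x @ wq, x @ wk, x @ wv
    scores = q @ k.T / np.sqrt(k.shape[-1])
    scores -= scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)  # softmax: each row sums to 1
    return weights @ v


rng = np.random.default_rng(0)
image = rng.random((32, 32, 3))         # hypothetical 32x32 RGB input
tokens = patchify(image, patch=8)       # 16 tokens of 8 * 8 * 3 = 192 values
d = 64                                  # hypothetical embedding size
embed = rng.normal(size=(192, d)) / np.sqrt(192)  # linear patch embedding
x = tokens @ embed
wq, wk, wv = (rng.normal(size=(d, d)) / np.sqrt(d) for _ in range(3))
out = self_attention(x, wq, wk, wv)
print(tokens.shape, out.shape)
```

Because the attention weights connect every patch to every other patch in one step, distant regions of the image can influence each other directly, which is the “global context” advantage listed in the table.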

Core Tasks of Computer Vision in AI

To understand the world, AI performs several specialized tasks:

- Image Classification: Assigning a label to an entire image (e.g., “This is a dog”).

- Object Detection: Identifying objects and drawing a “bounding box” around each one. This is a key reason AI and robotics can work together to change the world: detection lets robots navigate their surroundings and interact with items.

- Image Segmentation: Pixel-level classification. Instead of a box, the AI labels every pixel, outlining the exact shape of each object. This is vital in medical imaging, for example to outline a tumor.

- Object Tracking: Following a moving object across multiple frames of video.

- Optical Character Recognition (OCR): Converting printed or handwritten text into digital data.
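The tasks differ mainly in the shape of their output. The sketch below uses hypothetical outputs for a tiny 4x4 image: a single label for classification, a labeled box with a confidence score for detection (the `(x1, y1, x2, y2)` corner convention is an assumption; libraries differ), and a per-pixel mask for segmentation. It then scores the predicted mask against a reference mask with intersection over union (IoU), a widely used segmentation metric.

```python
import numpy as np

# Hypothetical outputs for one 4x4 image.
classification = "dog"                     # one label for the whole image
detection = [("dog", (0, 0, 2, 3), 0.91)]  # (label, (x1, y1, x2, y2), confidence)
pred_mask = np.array([[1, 1, 0, 0],        # segmentation: a decision for every pixel
                      [1, 1, 0, 0],
                      [1, 1, 1, 0],
                      [0, 0, 0, 0]], dtype=bool)
true_mask = np.array([[1, 1, 0, 0],        # hypothetical ground-truth outline
                      [1, 1, 0, 0],
                      [1, 1, 0, 0],
                      [1, 0, 0, 0]], dtype=bool)


def mask_iou(a: np.ndarray, b: np.ndarray) -> float:
    """Intersection over union of two binary masks (1.0 if both are empty)."""
    union = np.logical_or(a, b).sum()
    return float(np.logical_and(a, b).sum() / union) if union else 1.0


print(classification, detection)
print(mask_iou(pred_mask, true_mask))
```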

Popular Deep Learning Architectures

Modern computer vision in AI relies on several heavy-hitting architectures:

- CNNs: The foundation of modern vision, inspired by the organization of the visual cortex, as seen in early research on the Neocognitron.

- GANs (Generative Adversarial Networks): Two networks “compete”: a generator creates images while a discriminator tries to tell them apart from real ones, pushing the generator toward realistic output. GANs powered many early deepfakes and AI-generated art.

- YOLO (You Only Look Once): An extremely fast, single-stage model used for real-time object detection.

- VAEs (Variational Autoencoders): Used for compressing images and generating new versions of data.

To build these, developers use libraries like Keras, TensorFlow, and PyTorch, which provide the building blocks for creating these complex neural networks.
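Detectors in the YOLO family typically predict many overlapping candidate boxes for the same object, and these are commonly filtered with non-maximum suppression (NMS). The pure-Python sketch below implements the standard greedy version under an assumed `(x1, y1, x2, y2)` box format with hypothetical detections; in practice a library routine such as `torchvision.ops.nms` would usually be used instead.

```python
Box = tuple[float, float, float, float]  # (x1, y1, x2, y2): top-left, bottom-right


def box_iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(boxes: list[Box], scores: list[float], iou_threshold: float = 0.5) -> list[int]:
    """Greedy NMS: keep the highest-scoring box, drop boxes that overlap it
    by more than `iou_threshold`, and repeat on what remains."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep: list[int] = []
    while order:
        best = order.pop(0)
        keep.append(best)
        order = [i for i in order if box_iou(boxes[best], boxes[i]) <= iou_threshold]
    return keep


# Hypothetical raw detections: two boxes on one object, one on another object.
boxes = [(10, 10, 50, 50), (12, 12, 52, 52), (100, 100, 140, 140)]
scores = [0.90, 0.75, 0.80]
print(nms(boxes, scores))  # indices of the boxes that survive
```

The threshold is a trade-off: a lower value removes more duplicates but can also suppress genuinely separate objects that sit close together.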

Real-World Applications of Computer Vision in AI

We encounter computer vision in AI every day, often without realizing it. Its impact spans almost every major industry.

Healthcare Diagnostics

In medicine, computer vision is literally a lifesaver. Deep learning models can now analyze X-rays, MRIs, and CT scans, and in some studies their accuracy on specific diagnostic tasks rivals or even exceeds that of expert radiologists. Research on medical image analysis and dermatology has shown that AI can classify skin cancer from images about as accurately as trained dermatologists.

Manufacturing and Quality Control

Factories use “machine vision” to inspect thousands of parts per minute. An AI system can spot a microscopic crack in a turbine blade or a missing component on a motherboard much faster and more objectively than a human inspector. This drives massive operational efficiency.

Agriculture: The High-Tech Farm

Farmers are using drones and ground robots equipped with vision systems to monitor crop health. Part of the future of food and artificial intelligence on the farm is AI that identifies specific weeds and applies herbicide only to those spots, reducing chemical use by up to 90%.

Retail and Autonomous Shopping

The retail world is being transformed by systems like Amazon’s Just Walk Out technology. Using a network of cameras and computer vision algorithms, these stores track which items a customer picks up and automatically charge their account, eliminating the need for checkout lines. This is a prime example of how artificial intelligence in e-commerce is moving from the screen into the physical world.

Industry Impact and Market Growth

The economic potential of computer vision in AI is massive. According to Verified Market Research data, the market is expected to reach $205 billion by 2030.

This growth is driven by the need for scalability. A human can only watch one security monitor at a time; an AI can watch thousands, flagging only the suspicious activity.

Tools, Trends, and Challenges in Visual AI

If you are a developer or a business leader looking to implement these systems, you need to know the landscape of tools and the hurdles you’ll face.

The Essential Toolkit

- OpenCV: The “Swiss Army Knife” of computer vision. It contains over 2,500 algorithms for everything from face detection to camera calibration.

- Scikit-image: A collection of algorithms for image processing in Python.

- Torchvision: A specialized library for PyTorch users that provides datasets and models for computer vision.

Current Trends: Edge AI and Real-Time Analytics

We are moving away from sending all visual data to the cloud. Edge AI allows the computer vision processing to happen directly on the device—like a smart camera or a drone. This reduces latency (speeding up decisions) and improves privacy because the video never leaves the device.

Hardware is also evolving. While CPUs handle general tasks, GPUs (Graphics Processing Units) and TPUs (Tensor Processing Units) are designed specifically to handle the massive parallel math required for neural networks.

Deployment Benefits and Challenges

While the benefits are clear, deploying computer vision in AI is not without its headaches:

- Computational Requirements: Training these models requires massive power and expensive hardware.

- Data Privacy: Using facial recognition or public surveillance raises significant ethical questions. AI and ethics: Navigating the complex landscape of artificial intelligence is a crucial read for anyone deploying these systems.

- Adversarial Attacks: Sometimes, subtle changes to an image—invisible to humans—can trick an AI into misclassifying an object (like seeing a stop sign as a speed limit sign).

- Dataset Bias: If an AI is trained on a narrow set of images, it may perform poorly on diverse real-world data.
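The adversarial-attack point can be sketched with the Fast Gradient Sign Method (FGSM) applied to a toy logistic-regression classifier in NumPy. The weights, input, and “stop sign” label are all hypothetical, and real attacks target deep networks rather than a linear model, but the mechanism is the same: move every pixel a tiny, fixed amount in whichever direction increases the model’s loss.

```python
import numpy as np


def predict_proba(x: np.ndarray, w: np.ndarray, b: float) -> float:
    """Toy classifier: probability that x belongs to class 1 ('stop sign')."""
    return float(1.0 / (1.0 + np.exp(-(x @ w + b))))


def fgsm(x: np.ndarray, w: np.ndarray, b: float, label: int, eps: float) -> np.ndarray:
    """Fast Gradient Sign Method for logistic regression.

    The gradient of the cross-entropy loss with respect to the input is
    (p - label) * w; each pixel moves by eps in the sign of that gradient,
    then is clipped back to the valid [0, 1] range.
    """
    p = predict_proba(x, w, b)
    grad_x = (p - label) * w
    return np.clip(x + eps * np.sign(grad_x), 0.0, 1.0)


rng = np.random.default_rng(0)
n_pixels = 784                          # hypothetical 28x28 image, flattened
w = rng.normal(size=n_pixels)           # hypothetical "trained" weights
x = rng.uniform(0.2, 0.8, size=n_pixels)
b = 2.0 - float(x @ w)                  # bias chosen so the clean logit is 2.0

x_adv = fgsm(x, w, b, label=1, eps=0.05)
print(predict_proba(x, w, b))       # confidently class 1 on the clean input
print(predict_proba(x_adv, w, b))   # near 0 after a perturbation of at most 0.05 per pixel
```

Each individual change is small, but hundreds of pixels all pushed in the “wrong” direction add up to a large shift in the model’s output, which is why such perturbations can be nearly invisible to people yet decisive for the model.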

As we discuss in The strategic guide to using AI in your business today, success requires balancing accuracy with speed and ensuring your data is representative of the real world.

Frequently Asked Questions about Computer Vision

What is the difference between computer vision and image processing?

Image processing focuses on the enhancement or transformation of an image (like removing noise or changing contrast). Computer vision focuses on the understanding and interpretation of the image (like identifying that a group of pixels represents a car).

How does computer vision enable machines to see?

Machines “see” by converting images into numerical arrays. They then use neural networks—specifically layers of filters—to identify patterns, shapes, and textures. By training on millions of examples, the machine learns to associate certain patterns with specific objects or actions.

What are the most popular libraries for implementing computer vision?

The most widely used libraries are OpenCV for general tasks, and TensorFlow or PyTorch for building deep learning models. Keras is also popular for its user-friendly interface when building neural networks.

Conclusion

Computer vision in AI is no longer a futuristic concept; it is the foundation of modern automation. From the smartphone in your pocket to the medical labs saving lives, the ability for machines to interpret visual data is changing everything. As we look forward, the rise of multimodal AI—where vision is combined with language and sound—will create even more “human-like” machines.

At Apex Observer News, we are committed to keeping you at the forefront of these technological shifts. Whether it’s the latest in autonomous vehicles or breakthroughs in healthcare AI, we bring you the insights you need to navigate this fast-moving world.
