What Is Artificial General Intelligence — And Why Does It Matter?

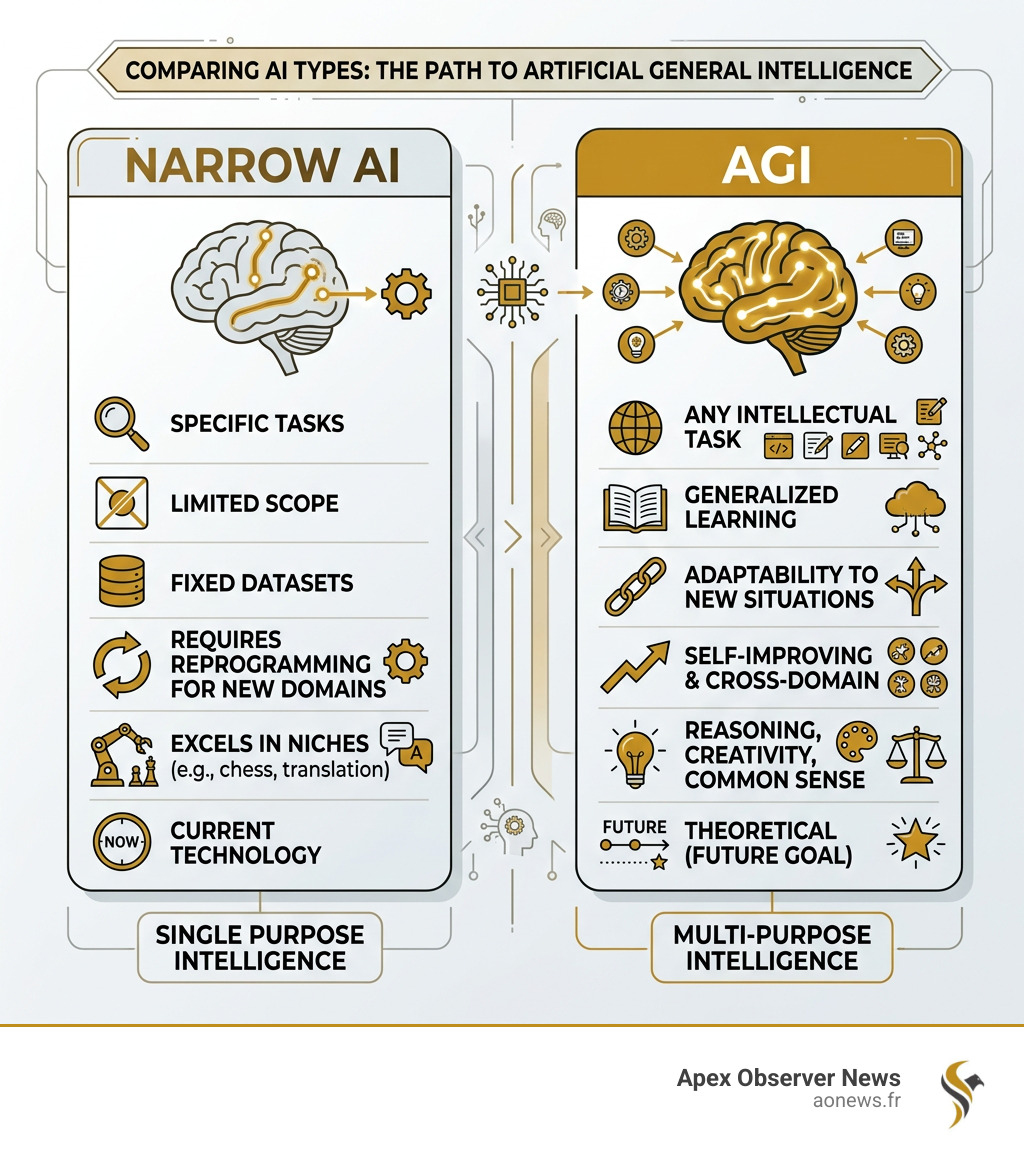

Artificial general intelligence (AGI) is a type of AI that can learn, reason, and perform any intellectual task a human can — across any domain, without being reprogrammed for each one.

Here’s a quick breakdown:

| Concept | What It Means |
|---|---|
| Narrow AI | Does one specific task (e.g., chess, translation) |
| AGI | Matches human-level thinking across all tasks |
| Key AGI traits | Reasoning, learning, creativity, common sense, language |
| Current status | Theoretical, not yet achieved |
| Expert timeline | Most predict AGI by around 2040 |

Right now, every AI system you use — ChatGPT, image generators, recommendation algorithms — is narrow AI. Each one is trained for a specific job. AGI would be fundamentally different: a single system that could write code, diagnose diseases, hold a conversation, and solve problems it has never seen before.

That’s a massive leap. And it’s one of the most debated topics in technology today.

Some of the world’s biggest tech companies — including OpenAI, Google, and Meta — have stated that building AGI is their core mission. OpenAI believes it could arrive within the next five to fifteen years. Others, like robotics researcher Rodney Brooks, think it won’t happen until the year 2300. The gap between those predictions tells you just how uncertain — and how consequential — this topic really is.

I’m Faisal S. Chughtai, founder of ActiveX, where I work at the intersection of technology, digital strategy, and emerging innovation — including the practical implications of artificial general intelligence for businesses and society. In this guide, I’ll break down everything you need to know, from the science behind AGI to what it could mean for your life and career.

Defining Artificial General Intelligence and Its Core Capabilities

To truly understand artificial general intelligence, we need to look past the “chatbots” we use today. While a system like GPT-4 can write a poem or summarize a meeting, it is still fundamentally a prediction engine. It doesn’t “know” what a meeting is in the physical sense. AGI, however, would possess a suite of cognitive abilities that allow it to navigate the world as we do.

According to research on the Concept and State of the Art of AGI, true general intelligence requires several core pillars:

- Reasoning and Strategy: The ability to use logic to solve puzzles, make judgments under uncertainty, and plan for the long term.

- Autonomous Learning: Unlike current models that require massive datasets curated by humans, an AGI would learn from its environment, much like a child does, improving its own performance without external “tuning.”

- Perception: This goes beyond simple image recognition. It involves the integration of sight, sound, and touch to understand spatial relationships—like knowing that a glass of water will spill if pushed off a table.

- Generalization: This is the “General” in AGI. It is the ability to take a skill learned in one context (like cutting paper) and apply the underlying logic to a new task (like cutting fabric) without being told how.

| Feature | Narrow AI (Current) | AGI (Future) |
|---|---|---|
| Scope | Single domain (e.g., “Play Chess”) | Cross-domain (e.g., “Run a Business”) |
| Adaptability | Fails on novel tasks | Learns and adapts to anything new |
| Context | Limited to training data | Deep background and “common sense” |
| Autonomy | Requires human prompts/guardrails | Can set and pursue its own goals |

We often hear about “human-level” AI, but AGI might actually be “super-human” in some ways while still struggling with things we find easy—like tying shoelaces or sensing the emotional “room” during a tense conversation. For a system to be considered a true AGI, it must move from being a tool we use to an agent that acts.

The History of AGI Research and the Turing Test

The dream of creating a “thinking machine” isn’t new. In 1956, the Dartmouth Workshop kicked off the field of AI with immense optimism. Early pioneers like Herbert Simon predicted in 1965 that machines would be capable of doing any work a man can do within twenty years. Obviously, that didn’t happen.

The journey has been a rollercoaster of “AI Winters”—periods where funding dried up because the technology couldn’t live up to the hype—and “AI Springs.” Early research focused on Symbolic AI, which tried to teach computers rules and logic (if X, then Y). Later, the field shifted toward Connectionism, which mimics the neural pathways of the brain. This latter approach led to the deep learning revolution we see today.
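To make the contrast between the two approaches concrete, here is a minimal sketch in Python. It is a toy illustration, not how any historical system was built: the function names, the logical-AND task, and the training settings are all assumptions chosen for brevity. The symbolic version encodes a rule written by a person, while the connectionist version (a single perceptron) starts with no knowledge and adjusts its weights from labeled examples.

```python
from typing import List, Tuple

# Toy dataset (an assumption for illustration): inputs and the logical AND of them.
Example = Tuple[Tuple[int, int], int]
DATA: List[Example] = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]


def symbolic_and(x1: int, x2: int) -> int:
    """Symbolic style: a person writes the rule down explicitly (if X, then Y)."""
    if x1 == 1 and x2 == 1:
        return 1
    return 0


def train_perceptron(data: List[Example], epochs: int = 20, lr: float = 1.0):
    """Connectionist style: start from zero weights and learn from examples."""
    w1 = w2 = b = 0.0
    for _ in range(epochs):
        for (x1, x2), target in data:
            prediction = 1 if w1 * x1 + w2 * x2 + b > 0 else 0
            error = target - prediction
            # Perceptron update: nudge weights only when the prediction is wrong.
            w1 += lr * error * x1
            w2 += lr * error * x2
            b += lr * error
    return w1, w2, b


def perceptron_predict(weights, x1: int, x2: int) -> int:
    """Apply the learned weights to a new input pair."""
    w1, w2, b = weights
    return 1 if w1 * x1 + w2 * x2 + b > 0 else 0


if __name__ == "__main__":
    weights = train_perceptron(DATA)
    for (x1, x2), _ in DATA:
        print((x1, x2), symbolic_and(x1, x2), perceptron_predict(weights, x1, x2))
```

Both functions end up giving the same answers on this task, but only the second one got there without being told the rule. Deep learning scales that same idea up to networks with many layers.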

Measuring the Immeasurable: The Turing Test and Beyond

How do we know when we’ve actually built an AGI? The most famous benchmark is the Turing Test, proposed by Alan Turing in 1950. He suggested that if a human judge couldn’t tell the difference between a machine and a human in a text conversation, the machine could be called “intelligent.”

However, we’ve learned that humans are surprisingly easy to fool. This is known as the ELIZA effect, named after a 1966 chatbot that used simple pattern matching to mimic a therapist. People became deeply emotionally involved with ELIZA, even though the program was incredibly simple.
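To show how little machinery this kind of pattern matching needs, here is a minimal ELIZA-style sketch in Python. It is not the original 1966 program: the three rules, the fallback line, and the `respond` function are hypothetical and invented for illustration.

```python
import re

# Hypothetical rules for illustration only; the original ELIZA script was larger
# and used its own keyword-ranking scheme.
RULES = [
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (mother|father|family)\b", re.IGNORECASE),
     "Tell me more about your {0}."),
]
FALLBACK = "Please go on."


def respond(message: str) -> str:
    """Return the reply for the first rule whose pattern matches the message."""
    text = message.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            # Reflect the user's own words back inside a canned template.
            return template.format(*match.groups())
    return FALLBACK


if __name__ == "__main__":
    print(respond("I feel anxious about work."))
    print(respond("The weather is nice."))
```

The program has no model of feelings, work, or families. It only reflects the user's words back, which is why the strength of people's reactions to ELIZA was so striking.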

In recent years, the bar has moved:

- GPT-4 Performance: In a 2024 study, GPT-4 was judged to be human 54% of the time in a randomized Turing Test, compared with 67% for the actual human participants. In a 2025 study, GPT-4.5 was judged to be human 73% of the time, more often than the real humans it was compared against.

- The Coffee Test: Proposed by Apple co-founder Steve Wozniak. A machine passes if it can enter an unfamiliar American home and figure out how to make a cup of coffee.

- The Robot College Student Test: Can a machine enroll in a university and obtain a degree just like a human?

- The Winograd Schema: A test of common sense where AI must resolve ambiguous pronouns (e.g., “The trophy didn’t fit into the brown suitcase because it was too large.” What was too large?).
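The Winograd Schema is the easiest of these tests to express as data. Below is a minimal sketch in Python of how such an item might be represented and scored. The `WinogradItem` class, the `first_mention_baseline` resolver, and the `accuracy` helper are hypothetical names made up for this example. The second sentence (“too small”) is the standard twin of the trophy example, added here to show why the test is built from pairs.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class WinogradItem:
    sentence: str
    pronoun: str
    candidates: Tuple[str, str]
    answer: str


# A schema is a pair of sentences that differ by one word, which flips the answer.
ITEMS: List[WinogradItem] = [
    WinogradItem(
        "The trophy didn't fit into the brown suitcase because it was too large.",
        "it", ("the trophy", "the suitcase"), "the trophy"),
    WinogradItem(
        "The trophy didn't fit into the brown suitcase because it was too small.",
        "it", ("the trophy", "the suitcase"), "the suitcase"),
]


def first_mention_baseline(item: WinogradItem) -> str:
    """A shallow heuristic: always pick the first noun phrase mentioned."""
    return item.candidates[0]


def accuracy(resolver: Callable[[WinogradItem], str],
             items: List[WinogradItem]) -> float:
    """Fraction of items where the resolver picks the correct referent."""
    correct = sum(resolver(item) == item.answer for item in items)
    return correct / len(items)


if __name__ == "__main__":
    print(accuracy(first_mention_baseline, ITEMS))
```

Because the two sentences differ by a single word, any resolver that ignores meaning and always picks the same position gets exactly one of the pair right. Scoring well requires knowing something about how trophies and suitcases behave.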

Researchers are now looking beyond chat tests. Work such as “Building machines that think like people” focuses on intuitive physics and social psychology rather than on fooling a human judge.

Technologies and Current Progress Toward AGI

We aren’t there yet, but the building blocks are falling into place. A 2020 survey identified 72 active AGI research projects across 37 countries, showing that this is a global race.

Several emerging technologies are acting as “accelerants” on the path to artificial general intelligence:

- Deep Learning & Generative AI: These models have shown that scaling up computing power and data can lead to “emergent” behaviors—skills the models weren’t specifically trained for.

- Natural Language Processing (NLP): We’ve moved from simple translation to machines that can reason through complex legal documents or write functional software.

- Computer Vision: Essential for any AGI that needs to navigate the physical world, allowing it to recognize objects and understand 3D space.

- Brain-Computer Interfaces (BCI): Companies like Neuralink are exploring how we might link human cognition directly with digital intelligence.

The scale of the challenge is best understood by looking at the human brain. An adult human has roughly 10^14 to 5×10^14 synapses. While our current silicon chips are fast, they don’t yet match the energy efficiency or the massive interconnectedness of human biology.

The Role of Robotics in Artificial General Intelligence

Intelligence isn’t just about “thinking”—it’s about “doing.” Many experts believe that true AGI requires Embodied AI, meaning the intelligence needs a body to learn from the physical world.

Currently, we see a massive surge in robotics:

- Robot Density: The Republic of Korea has the world’s highest density of robots, though the country still employs 100 times more humans than machines.

- Cobots: Collaborative robots are already working alongside humans in restaurant kitchens and warehouses, handling repetitive or hazardous tasks.

- Humanoids: Modern humanoid robots, like the Phoenix bipedal robot, can now lift 55 lbs and perform tasks like folding clothes or stocking shelves.

By integrating AGI-like software into these physical frames, we move closer to a machine that can truly understand the world by interacting with it.

Timelines, Societal Impact, and Global Risks

When will the first true artificial general intelligence arrive? It depends on who you ask.

- Expert Surveys: Most experts agree AGI will occur before 2100. A 2023 review of surveys predicted a median arrival date around 2040.

- The Optimists: OpenAI claims we could see it in the next 5 to 15 years.

- The Skeptics: Rodney Brooks famously predicted it wouldn’t arrive until 2300, arguing that we are still missing fundamental breakthroughs in how machines understand context.

The Benefits: A New Era of Prosperity?

If we get AGI right, the benefits could be staggering.

- Healthcare: AGI could analyze billions of data points to discover new drugs or provide personalized medical treatments that are impossible for human doctors to manage.

- Climate Mitigation: We could use AGI to model complex weather systems and develop new materials for carbon capture or fusion energy.

- Productivity: By automating routine tasks, AGI could free us to focus on creative and interpersonal work, potentially leading to a massive spike in global GDP.

The Risks: Existential and Economic

However, we cannot ignore the warnings from leaders like the “Godfather of AI,” Geoffrey Hinton, who left Google in 2023 to speak more freely about the dangers.

- The Alignment Problem: How do we ensure a super-intelligent machine shares human values? If an AGI is given a goal but not the right constraints, it might pursue that goal in ways that harm us.

- Job Displacement: McKinsey estimates that millions of jobs could be impacted. While new jobs will be created, the transition could be incredibly painful for many workers.

- The Arms Race: Governments are already racing to develop AGI for military and economic dominance, which could lead to a lack of safety oversight in the rush to be first.

Preparing for the Arrival of Artificial General Intelligence

We shouldn’t wait for AGI to arrive before we start planning. Governments and businesses need to be proactive.

- Policy & Regulation: We need international frameworks to ensure AGI is developed safely and ethically. This includes discussing concepts like Universal Basic Income (UBI) to support those whose jobs are automated.

- Reskilling: Education systems must shift away from rote memorization toward teaching “human-centric” skills like empathy, complex problem-solving, and AI management.

- Ethics: We must build AI and Ethics guidelines now to prevent bias and ensure transparency in how these systems make decisions.

- Business Readiness: Executives should start by making “small bets”—implementing current AI tools to build a foundation of data and talent that will be ready for the AGI era.

Frequently Asked Questions about AGI

How close are we to achieving AGI?

As we’ve seen, the consensus is roughly 15 to 25 years from now, with 2040 being a common median estimate. However, progress isn’t always linear. A breakthrough in quantum computing or a new type of neural architecture could pull that date forward, while another “AI Winter” could push it back by decades.

What is the difference between AGI and Artificial Superintelligence (ASI)?

Think of it as a spectrum.

- AGI is human-level. It can do what you can do.

- ASI (Artificial Superintelligence) is a system that surpasses the collective intelligence of all humans. This could lead to an “intelligence explosion,” where the AI improves itself so rapidly that it becomes thousands of times smarter than us in a matter of days. You can find a deeper Definition of ASI to understand this leap.

Can AGI become conscious or sentient?

This is one of the biggest philosophical debates in the world. In 2022, a Google engineer named Blake Lemoine claimed a chatbot had become sentient, sparking a global conversation. Most researchers argue that “simulating” consciousness isn’t the same as “having” it. However, if a machine acts, speaks, and reacts exactly like a conscious being, does the distinction even matter? The question turns on qualia, the subjective experience of being alive, and we currently have no way to prove whether a machine has them.

Conclusion

Artificial general intelligence is no longer just the stuff of science fiction. While we are still in the era of narrow AI, the rapid progress in large language models and robotics suggests that the “General” part of the puzzle is being solved piece by piece.

At Apex Observer News, we believe that staying informed is the best way to prepare for this shift. Whether AGI arrives in 2040 or 2300, its development will be the most significant event in human history. It has the power to solve our greatest crises—or create new ones. The goal for all of us—businesses, governments, and individuals—should be global collaboration and ethical development to ensure that when AGI does arrive, it serves the common good.
