The Fundamental Link Between Artificial Intelligence and Machine Learning

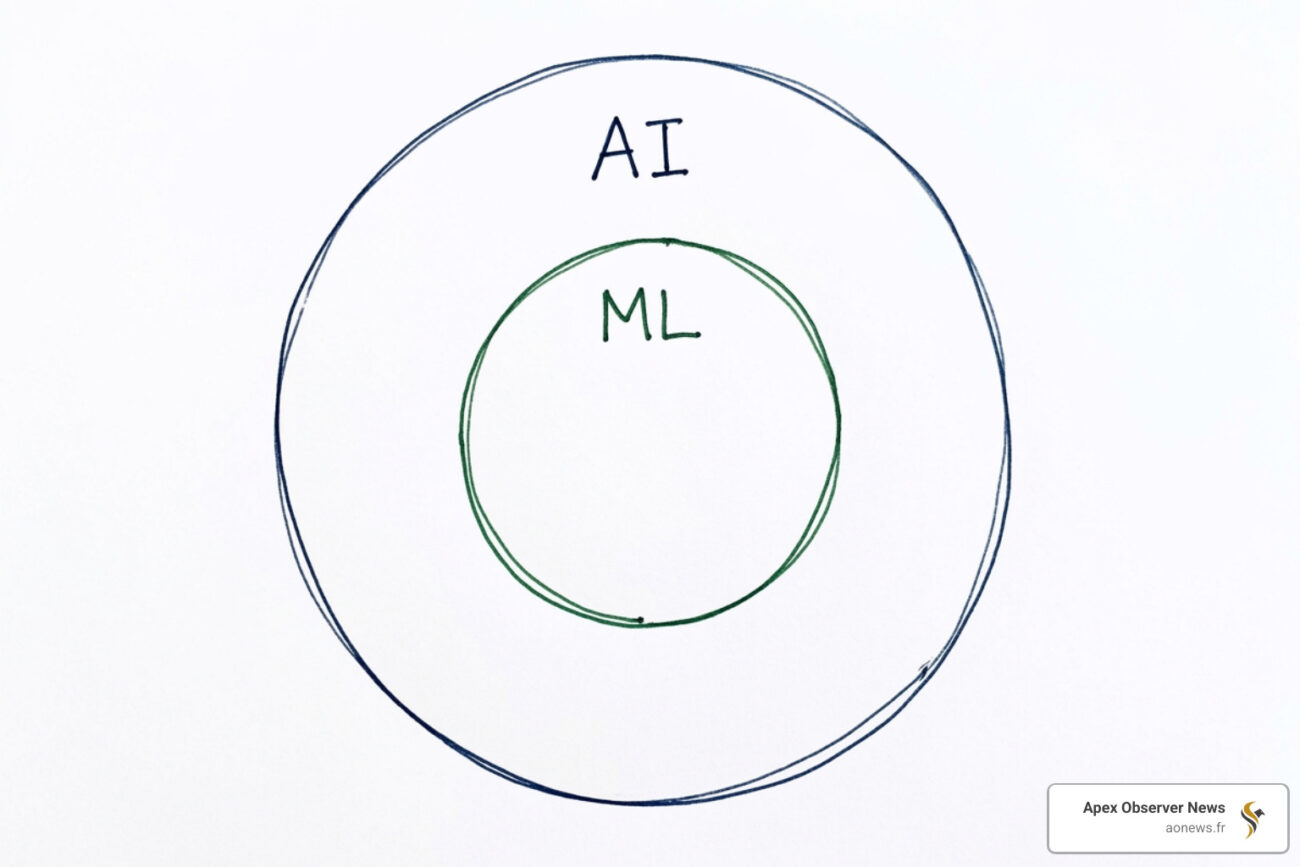

To understand the connection, we have to look at the hierarchy. Imagine a set of Russian nesting dolls. The largest doll is Artificial Intelligence (AI). Inside that doll is a smaller one called Machine Learning (ML). Inside that is an even smaller one called Deep Learning.

At its most basic level, AI is the capability of a computational system to perform tasks we usually associate with human intelligence—things like reasoning, problem-solving, and perception. However, there are two ways to get a machine to “act” smart.

The first way is through explicit programming. This is the “old school” AI. If you’ve ever played a video game where a non-player character (NPC) follows a strict path or reacts to you using “if-then” logic, you’ve seen this in action. The programmer writes every single rule. If the player does X, the computer does Y.

The second way is Machine Learning. Instead of giving the computer a list of rules, we give it a massive pile of data and an algorithm. The computer then finds the rules itself. As Oracle explains in their research on the difference between AI and ML, the primary distinction lies in how the system achieves its “intelligence.”

| Feature | Rule-Based AI (Non-ML) | Machine Learning (ML) |
|---|---|---|
| Logic Source | Human-coded rules (If/Then) | Data-driven patterns |
| Adaptability | Rigid; stays the same until updated | Improves with more data |
| Best Use Case | Simple, predictable tasks (GPS) | Complex, messy data (Face ID) |
| Programming | Explicitly programmed | Trained on examples |

In short, machine learning represents a shift within artificial intelligence from “telling a computer what to do” to “teaching a computer how to learn.” We are moving away from hand-written rules and toward statistical models whose parameters are adjusted as they see more data.
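To make the contrast concrete, here is a minimal Python sketch. The spam-flavored task, the function names, and the toy numbers are all assumptions made up for illustration. One function uses a cutoff a programmer picked by hand; the other picks its cutoff from labeled examples.

```python
# Toy task (assumed for illustration): flag a message as spam based on
# how many exclamation marks it contains.

def rule_based_is_spam(exclamations: int) -> bool:
    """Explicit programming: a human chose the cutoff of 3."""
    return exclamations >= 3


def learn_cutoff(examples: list[tuple[int, bool]]) -> int:
    """Machine learning in miniature: try every candidate cutoff and
    keep the one that classifies the most labeled examples correctly."""
    candidates = sorted({count for count, _ in examples})
    best_cutoff, best_correct = candidates[0], -1
    for cutoff in candidates:
        correct = sum((count >= cutoff) == is_spam for count, is_spam in examples)
        if correct > best_correct:
            best_cutoff, best_correct = cutoff, correct
    return best_cutoff


# Hypothetical labeled data: (exclamation count, is it spam?)
training_data = [(0, False), (1, False), (2, False), (4, False),
                 (5, True), (6, True), (8, True)]

learned_cutoff = learn_cutoff(training_data)
print("hand-written cutoff: 3")
print("learned cutoff:", learned_cutoff)
```

On this toy data the hand-written rule wrongly flags the harmless message with four exclamation marks, while the learned cutoff adapts to the examples it was given. Feed it different examples and the cutoff changes without anyone editing the code.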

A Journey Through Time: Key Milestones in AI and ML

We often think of AI as a 21st-century invention, but the groundwork was laid decades ago. The term “Artificial Intelligence” was officially born in 1956, with the “Dartmouth Summer Research Project on Artificial Intelligence”. Led by John McCarthy and other pioneers, the goal was to see if every aspect of learning or intelligence could be so precisely described that a machine could be made to simulate it.

While McCarthy was defining the umbrella term, others were looking for specific ways to make it work. In 1959, Arthur Samuel published a groundbreaking paper titled “Some Studies in Machine Learning Using the Game of Checkers”. He didn’t want to program every possible checkers move; instead, he wrote a program that played against itself thousands of times. By watching which moves led to wins, the program “learned” to play better than Samuel himself. This was the birth of the term “Machine Learning.”

The history of AI and machine learning hasn’t always been a straight line up. We’ve had “AI Winters,” periods where the hype outpaced the technology, leading to a loss of funding and interest. However, the resurgence of neural networks in the late 2000s, combined with the massive processing power of modern GPUs and the explosion of “Big Data,” has brought us into the golden age we see today.

How Machines Actually “Learn” Without Explicit Programming

The Mechanics of Artificial Intelligence and Machine Learning

If we aren’t writing rules, how does the computer actually figure things out? It starts with training data. Think of this as the textbook for the machine. If we want a model to recognize a cat, we don’t tell it “a cat has pointy ears.” Instead, we show it 10 million pictures of cats.

The algorithm looks for statistical patterns: specific arrangements of pixels that appear frequently in cat photos but not in dog photos. The model’s parameters (its internal variables) include weights, which set how much importance each feature gets when the model makes a guess. If the guess is wrong, the system adjusts its weights and tries again. Repeated over many examples, this iterative process gradually reduces the error.
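The guess-and-adjust loop can be sketched with a perceptron, one of the simplest learning algorithms. This is only an illustration: the two features, the four examples, and the learning rate are made-up assumptions, and real image models work on pixels with far more parameters.

```python
# Hypothetical toy data: each example is (features, label).
# Features are [has_whiskers, barks]; label 1 means "cat", 0 means "not a cat".
examples = [
    ([1.0, 0.0], 1),
    ([1.0, 1.0], 0),
    ([0.0, 1.0], 0),
    ([0.0, 0.0], 0),
]

weights = [0.0, 0.0]   # importance assigned to each feature
bias = 0.0             # extra parameter that shifts the decision boundary
learning_rate = 0.5    # how big each adjustment is (assumed value)


def predict(features):
    """Make a guess: weighted sum of the features, then a yes/no cutoff."""
    score = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if score > 0 else 0


for epoch in range(20):
    mistakes = 0
    for features, label in examples:
        error = label - predict(features)   # 0 when the guess was right
        if error != 0:
            mistakes += 1
            # Nudge each weight in the direction that reduces the error.
            for i, x in enumerate(features):
                weights[i] += learning_rate * error * x
            bias += learning_rate * error
    print(f"epoch {epoch}: {mistakes} mistakes, weights={weights}, bias={bias}")
    if mistakes == 0:   # every guess was right, so stop adjusting
        break
```

Nobody told the program that barking counts against “cat.” The negative weight on the second feature emerges from the corrections alone.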

One well-known example of this “self-optimization” is the k-means clustering algorithm. Animations of k-means show the algorithm iteratively moving its center points to better group the data on its own: each point is assigned to its nearest center, and each center then moves to the average of its points. It’s not being “told” where the groups are; it’s finding them through math.
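For readers who want to see the loop itself, here is a minimal k-means sketch in plain Python. The six points and the two starting centers are assumptions chosen to keep the example small; production code would normally use a library implementation and randomized starting centers.

```python
def k_means(points, centers, iterations=10):
    """Plain k-means on 2D points, starting from the given centers."""
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest center.
        clusters = [[] for _ in centers]
        for x, y in points:
            distances = [(x - cx) ** 2 + (y - cy) ** 2 for cx, cy in centers]
            clusters[distances.index(min(distances))].append((x, y))

        # Update step: move each center to the mean of its points.
        new_centers = []
        for center, members in zip(centers, clusters):
            if members:
                mean_x = sum(p[0] for p in members) / len(members)
                mean_y = sum(p[1] for p in members) / len(members)
                new_centers.append((mean_x, mean_y))
            else:
                new_centers.append(center)  # keep a center that got no points

        if new_centers == centers:  # nothing moved, so we have converged
            break
        centers = new_centers
    return centers, clusters


# Hypothetical data: two loose groups, with no labels attached.
points = [(1, 1), (1, 2), (2, 1), (8, 8), (9, 8), (8, 9)]
centers, clusters = k_means(points, centers=[(0, 0), (5, 5)])
print("final centers:", centers)
print("clusters:", clusters)
```

The centers start in arbitrary places and end up in the middle of each group, which is the same motion the animations show.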

Main Types of Learning Models

Not all learning happens the same way. We generally categorize machine learning into three main styles:

- Supervised Learning: This is like a student with a teacher. We give the machine “labeled” data (e.g., “This is an image of a car; this is an image of a tree”). The machine learns to associate specific features with those labels. This is the most common type used today for things like email spam filters.

- Unsupervised Learning: Here, the machine has no labels and no teacher. It looks at a giant pile of data and tries to find hidden structures. For example, association algorithms in e-commerce are used by companies like Amazon to notice that people who buy “charcoal” also tend to buy “marshmallow skewers,” even if no one told the computer those items were related.

- Reinforcement Learning: This is learning through trial and error. The machine receives “rewards” for good actions and “penalties” for bad ones. This is how robots learn to walk or how systems like AlphaGo learned to defeat world champions.
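Taking the unsupervised example from the list above, the co-purchase idea can be sketched by simply counting which items show up together. The baskets below are invented, and this is a bare-bones version of association analysis, not a description of how any particular retailer does it.

```python
from collections import Counter
from itertools import combinations

# Hypothetical shopping baskets; nothing labels which items "belong together".
baskets = [
    {"charcoal", "marshmallow skewers", "lighter fluid"},
    {"charcoal", "marshmallow skewers"},
    {"charcoal", "burger buns"},
    {"marshmallow skewers", "charcoal", "burger buns"},
    {"notebook", "pens"},
]

item_counts = Counter()
pair_counts = Counter()
for basket in baskets:
    item_counts.update(basket)
    # Count every unordered pair of items bought together.
    pair_counts.update(combinations(sorted(basket), 2))


def confidence(if_bought: str, then_bought: str) -> float:
    """Share of baskets containing `if_bought` that also contain `then_bought`."""
    pair = tuple(sorted((if_bought, then_bought)))
    return pair_counts[pair] / item_counts[if_bought]


print(confidence("charcoal", "marshmallow skewers"))   # 0.75
print(confidence("marshmallow skewers", "charcoal"))   # 1.0
```

In this toy data, three of the four charcoal baskets also contain skewers, so the pattern surfaces from the counts without anyone declaring the items related.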

Deep Learning and the Neural Network Hierarchy

Scaling Artificial Intelligence and Machine Learning with Deep Networks

You’ve likely heard the term “Deep Learning.” This is the high-octane version of machine learning. It relies on Artificial Neural Networks, which are loosely inspired by the biological neurons in the human brain.

These networks consist of an input layer, an output layer, and several “hidden layers” in between. When a network has many hidden layers, we call it “Deep.” This architecture allows the machine to handle incredibly complex tasks. For instance, research on Convolutional Neural Networks (CNNs) has shown how these layers can automatically extract features from images, starting with simple edges and building up to complex objects like faces.
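To give a feel for what “extracting an edge” means, here is a small sketch of the sliding-filter operation at the heart of a convolutional layer. The tiny image and the filter values are hand-picked assumptions; in a real CNN the filter values are learned during training, and there are many filters stacked over many layers.

```python
# A tiny grayscale "image": dark on the left (0), bright on the right (9).
image = [
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
]

# Hand-picked filter that responds to left-to-right jumps in brightness.
# In a real CNN these numbers would be learned, not written by hand.
kernel = [-1, 1]


def convolve_rows(img, k):
    """Slide the filter along each row and record its response."""
    width = len(k)
    return [
        [sum(k[j] * row[i + j] for j in range(width))
         for i in range(len(row) - width + 1)]
        for row in img
    ]


feature_map = convolve_rows(image, kernel)
for row in feature_map:
    print(row)   # each row prints [0, 9, 0]
```

The output is zero where the image is flat and large exactly where dark meets bright. Later layers combine many such responses into corners, textures, and eventually whole objects.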

The power of these networks is backed by the Universal Approximation Theorem, which states that a neural network with enough hidden units can approximate any continuous function on a bounded input range as closely as we like. This is part of why AI can now write poetry, generate art, and drive cars: it is approximating the complex “functions” behind human behavior. The theorem only guarantees that suitable weights exist, though; finding them still depends on data and training.
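A small experiment makes the approximation idea tangible. The sketch below builds a network with one hidden layer of ReLU units whose weights are computed directly from the target function rather than trained, so it illustrates only that good weights exist. The target function (x squared on the range 0 to 1) and all names are assumptions chosen for the demo.

```python
def relu(z: float) -> float:
    return max(0.0, z)


def build_relu_network(f, hidden_units: int):
    """Return a one-hidden-layer ReLU network that interpolates f on [0, 1].

    The weights are computed from f itself (no training involved).
    """
    step = 1.0 / hidden_units
    knots = [i * step for i in range(hidden_units)]
    # Slope of f on each segment between neighboring knots.
    slopes = [(f(k + step) - f(k)) / step for k in knots]
    # Each hidden unit contributes the change in slope at its knot.
    output_weights = [slopes[0]] + [
        slopes[i] - slopes[i - 1] for i in range(1, hidden_units)
    ]
    offset = f(0.0)

    def network(x: float) -> float:
        hidden = [relu(x - k) for k in knots]
        return offset + sum(w * h for w, h in zip(output_weights, hidden))

    return network


def max_error(f, hidden_units: int, samples: int = 1000) -> float:
    """Largest gap between f and the network on an evenly spaced grid."""
    net = build_relu_network(f, hidden_units)
    xs = [i / samples for i in range(samples + 1)]
    return max(abs(f(x) - net(x)) for x in xs)


def target(x: float) -> float:
    return x * x


for units in (4, 16, 64):
    print(units, "hidden units -> max error", max_error(target, units))
```

Each time the number of hidden units grows, the worst-case gap between the network and the target shrinks, which is the behavior the theorem describes.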

Real-World Applications and Industry Use Cases

AI and machine learning are no longer lab experiments; they are tools we use to solve massive problems.

- Healthcare: One of the most exciting frontiers is medical imaging. Researchers are now predicting cancer risk from imaging, using tools that can spot markers of illness in mammograms or X-rays that the human eye might miss.

- Finance: Banks use ML for financial fraud detection, analyzing your spending patterns in real time. If you usually buy coffee in Paris and suddenly someone tries to buy a laptop in Las Vegas with your card, the ML model can flag it in milliseconds.

- E-commerce and streaming: Recommendation engines on services like Netflix or Spotify are perhaps the most visible use of ML. They don’t just know what you liked; they know what people like you liked.

- Transportation: Autonomous vehicles combine computer vision with other ML techniques, such as reinforcement learning, to navigate traffic and recognize obstacles.
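As a rough illustration of the fraud example in the list above, the sketch below flags a purchase that is both far larger than a cardholder’s usual spending and made in a city they have never bought from. The history, the threshold, and the function name are assumptions. It is a simple statistical baseline: real fraud models learn from many more signals than amount and location.

```python
from statistics import mean, stdev

# Hypothetical card history: (amount in euros, city).
history = [
    (4.50, "Paris"), (5.20, "Paris"), (3.80, "Paris"),
    (6.10, "Paris"), (4.90, "Paris"), (5.50, "Paris"),
]


def is_suspicious(amount: float, city: str, past, z_limit: float = 3.0) -> bool:
    """Flag a purchase that is unusually large AND made in an unseen city."""
    amounts = [a for a, _ in past]
    # How many standard deviations above the usual spend is this purchase?
    z_score = (amount - mean(amounts)) / stdev(amounts)
    known_cities = {c for _, c in past}
    return z_score > z_limit and city not in known_cities


print(is_suspicious(5.00, "Paris", history))         # False
print(is_suspicious(1200.00, "Las Vegas", history))  # True
```

What counts as “usual” here is computed from the cardholder’s own history rather than fixed in advance, so the same code adapts to a customer who routinely makes large purchases.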

For more on how these tools are changing specific sectors, check out our guides on artificial intelligence in e-commerce and the application of artificial intelligence across various fields.

Challenges, Ethics, and the Future of Intelligent Systems

As we integrate AI and machine learning into our lives, we face significant hurdles. The most pressing is algorithmic bias. Because machines learn from human data, they can inherit our prejudices. If a hiring AI is trained on data from a company that historically only hired men, the AI might learn to “downrank” resumes that include the word “women’s.”

We must actively use strategies to fight machine learning bias, such as carefully vetting training data and ensuring diverse teams are building these systems.

Another challenge is explainability. Deep learning models are often “black boxes”: we know they work, but we don’t always know why they made a specific decision. This has led to a push for a “right to explanation” in legal frameworks, especially in the EU, where data-protection rules give people some rights to learn how an automated decision, such as loan eligibility or an insurance premium, was reached.

Finally, we must consider the impact on the workforce. While some worry that their job will be next as AI takes over, many experts believe AI will augment human roles rather than replace them entirely. The goal is “human-centered AI”: technology that helps us work better, not just faster.

For a deeper dive into these moral questions, see our article on AI and ethics.

Frequently Asked Questions about AI and ML

Is Machine Learning a subset of AI or an independent concept?

Machine learning is a subset of AI. Think of AI as the destination (creating intelligent machines) and ML as the primary vehicle we currently use to get there. As the MIT Center for Collective Intelligence notes, over the last decade ML has become the most important way most parts of AI are done.

What infrastructure and tools are needed to implement these solutions?

Implementing AI and machine learning requires three things:

- Data: High-quality, cleaned datasets.

- Hardware: High-performance GPUs or cloud computing power.

- Software: Most practitioners use Python because of its vast library ecosystem. Leading frameworks like TensorFlow and PyTorch provide the pre-built modules needed to create neural networks without starting from scratch.

Can Artificial Intelligence exist without Machine Learning?

Yes. AI can exist as “Symbolic AI” or “Expert Systems.” These rely on hard-coded rules and logic. By a loose definition, even a calculator is a very simple form of non-ML AI; it “knows” how to do math, but it never “learns” to do it better. It just follows the rules built into its chips.

Conclusion

We’ve come a long way from Arthur Samuel’s checkers program in 1959. Today, AI and machine learning form the backbone of the modern digital world. They’re helping us drive more safely, diagnose diseases earlier, and even find our next favorite song.

At Apex Observer News, we understand that staying informed about these technological shifts is vital. As we move toward a future of even greater automation and smarter systems, we’ll be here to curate the latest headlines and keep you updated on the technology innovations changing the world in 2025 and beyond.

Whether it’s using AI in your business today or exploring how AI is contributing to web development, the journey of continuous learning is one we are taking together.

Stay curious, stay informed, and keep watching this space for the next breakthrough.
